Last month I spent forty minutes with a coding agent tracking down why a service was silently swallowing errors. The fix was two lines. But along the way, I learned that the dependent service's documentation was wrong, that the error-handling pattern used across the codebase had a subtle gap nobody had noticed, and that a schema I needed lived in a repo no one on my team knew about.

I committed the fix. The knowledge it took to make the fix went nowhere.

Knowledge evaporating between work sessions is an old problem in software development.

AI makes it worse.

Sessions generate more knowledge now, most of which gets lost by default. The code gets committed. The reasoning doesn't.

The next person who hits this area pays the same forty minutes. The next agent might solve it in two minutes while burning through tokens, or spiral and never find it. Either way, it's repaying a cost that was already paid.

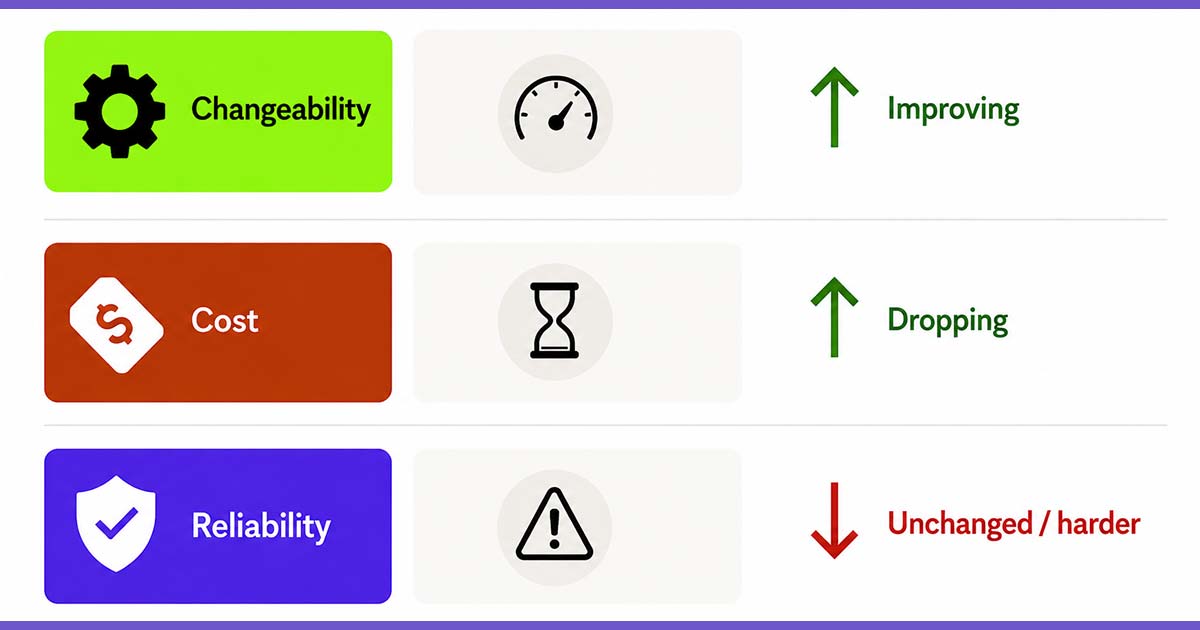

I've been using a simple practice to fix this. It's borrowed from test-driven development's (TDD) rhythm, and it works for the same reason TDD works: it separates concerns into phases so you can focus on one thing at a time. It's most useful when the work involves ambiguity, investigation, handoff risk, or a high chance of rediscovery—the kind of work where knowledge is expensive to acquire and easy to lose.

Red: Capture the problem

Before you start solving anything, create a context document. A simple markdown file works. Write down what you know about the problem: the goal, the requirements, the constraints, what's ambiguous, what you suspect but can't confirm. Include any supporting material in the same folder—screenshots, conversation excerpts, reference docs—anything the agent might need that doesn't fit in the markdown.

Then point your agent at it.

This can feel like overhead. But for the right class of problem—the ambiguous ones, the investigative ones—it's the step that prevents you from spending an hour building a clean solution to the wrong problem.

You and the agent align on what before either of you thinks about how. If the agent misunderstands the problem, you find out now, when it costs you a paragraph of clarification instead of a round of code review.

Green: Solve it, and keep a trail

Work the problem. As you go, keep the context document alive—have the agent draft updates while you curate what's worth keeping. Log the decisions made, approaches abandoned, and anything discovered that changes the shape of the problem.

This context pulls triple duty.

- It keeps you and the agent in sync as the work evolves.

- It gives you a record you can hand off if your session dies or a colleague picks the work up.

- It means the reasoning behind your changes exists somewhere other than your memory, which matters when someone asks "why did you do it this way?" in three months.

Don't worry about keeping it clean. It's a working document. That's what the next step is for.

Refactor: Remember and forget

You've solved the problem. You have a messy context document full of details. Most of them don't matter anymore. But some of them are knowledge you didn't have when you started—knowledge that someone will need again.

This is the step that actually matters. Ask two questions:

What did I learn that I didn't know at the start?

Where should that knowledge live so it gets found when it's needed?

Your agent can help here too—have it draft a summary of what was learned, then you decide what's worth keeping and where it goes:

- A dependent service's README was wrong: fix the README

- A schema's location wasn't documented: write it down somewhere discoverable

- You ran ten shell commands to diagnose an issue: turn them into a script

- You repeated a series of routine steps (migrate, regenerate, update changelog): make it an agent skill so next time it's a single command

- You made a design decision with real tradeoffs worth recording: write an ADR

Then trim or archive the rest. The dead ends, the intermediate states, the routine steps—keeping everything around just makes the signal harder to find.

This is about routing hard-won knowledge into the artifacts your team already relies on. Done badly, this turns into journaling. Done well, it turns private investigation into team memory.

On tooling

A markdown file in the repo is the simplest version of this. It travels with the code and any agent that clones the repo finds it without needing API access or extra tooling.

A GitHub issue gives you a collaboration surface—comments, handoffs, links to PRs.

Either works. Others would work, too. The platform matters less than discoverability and trust.

The payoff

The marginal cost of this is low. You're already doing the thinking, you're just giving it somewhere to land. And it builds on itself.

Each session leaves the system a little smarter than it was before: a better README, a new skill, a script that saves fifteen minutes next time. None of it is dramatic on its own. But a team that does this habitually builds a codebase that gets easier to work in over time, instead of one that slowly accumulates tribal knowledge in people's heads and then loses it when they move on.

The technique is simple. The discipline is the hard part. But if you've ever internalized the TDD loop, you already know how to make a phase-based practice into a habit. This is the same muscle.

Rick Reilly is a Staff Software Consultant at Test Double, and specializes in AI-assisted software development, developer coaching, and knowledge management for software teams.