AI coding assistants are remarkably capable—and remarkably fast at building the wrong thing.

AI doesn't have an accuracy problem. It has a target problem.

Your intent lives in your head. The AI sees a prompt, maybe some files, and takes its best guess. When that guess is wrong, you correct it. When it's right, you move on. But the why behind your decisions—the architectural constraints, the patterns your team follows, the business rules—those evaporate when the session ends.

Why most AI workflows break down

The typical AI-assisted workflow looks like this:

Plan → Implement → Write Tests → Fix What Broke

This works for small tasks, but it's brittle for features. The AI has no target to iterate against. It's guessing what "done" looks like, and you're correcting after the fact.

The test-driven development (TDD) version is better:

Plan → Write Failing Tests → Implement Until Green

Now the AI has something concrete. "Make these 5 tests pass" is unambiguous in a way that "build a login flow" never will be.

But where do those tests come from? Usually, from a developer's solo interpretation of a plan. That's where edge cases slip through, scope creep starts, and "oh wait, what about..." shows up during PR review.

Tools like GitHub's Spec-Kit address this by formalizing spec creation with slash commands—constitution, specify, plan, tasks, implement. It's a compelling approach to making specs the primary artifact.

My approach is different: instead of a single spec pipeline, I use three documents and a structured conversation to produce the tests. The conversation is what catches the things a solo developer misses.

The process: Three Amigos with AI

The core of my workflow is creating two design documents, then using both to write acceptance criteria collaboratively before any code exists.

What is Three Amigos?

Three Amigos is a practice from agile and behavior-driven development (BDD) communities where three perspectives collaborate on a story before implementation begins. The term is generally attributed to George Dinwiddie, and the practice has been widely adopted in agile testing circles—Lisa Crispin and Janet Gregory's Agile Testing books are a good starting point if you want to go deeper. The idea is simple: get a product person, a developer, and a tester in a room before coding starts, and use structured conversation to establish shared understanding.

A related technique is Example Mapping—using color-coded cards for stories (yellow), business rules (blue), examples (green), and open questions (red). The key insight is that examples alone aren't enough—you need the business rules too.
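To make the "rules, not just examples" point concrete, here is a minimal Ruby sketch of an example map as plain data. The `Rule` and `ExampleMap` structs and the sample cards are hypothetical, invented for illustration. The sketch assumes only the four card colors described above, with each rule owning its examples.

```ruby
# Hypothetical model of an Example Mapping session:
# one story (yellow), rules (blue) that own examples (green), open questions (red).
Rule = Struct.new(:text, :examples)

ExampleMap = Struct.new(:story, :rules, :questions) do
  # A rule with no examples is not yet understood well enough to test.
  def rules_without_examples
    rules.select { |rule| rule.examples.empty? }.map(&:text)
  end

  # Ready for acceptance criteria only when every rule has an example
  # and no red cards (open questions) remain.
  def ready?
    rules_without_examples.empty? && questions.empty?
  end
end

map = ExampleMap.new(
  "Log in with 2FA",
  [
    Rule.new("OTP expires after 5 minutes", ["Correct OTP entered at minute 4 creates a session"]),
    Rule.new("Only users with a verified phone receive SMS codes", [])
  ],
  ["What happens after three wrong OTPs?"]
)

puts map.rules_without_examples.inspect
puts map.ready? # prints false: one rule lacks examples and a question is open
```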

The three perspectives:

- Product/BA: "What should it do?"—defines the business intent

- Developer: "How will it work?"—surfaces technical constraints and edge cases

- QA: "What could go wrong?"—identifies missing scenarios and boundary conditions

Each perspective brings a document to the table:

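The BDD (Business Design Document) comes first: business rules before technical design. The TDD (Technical Design Document) comes second: now that you understand what to build, surface how the system will handle it. Then the Three Amigos session uses both as inputs to produce the acceptance criteria (ACs). In this workflow, BDD and TDD name documents, not the behavior-driven and test-driven development practices.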

The key value is that ambiguities surface before code is written, not during PR review or QA.

Three Amigos with AI

Here's the adaptation that works surprisingly well: you wear two hats, the AI wears three.

- You are the Product Owner (you know the business rules) and the Developer (you'll implement it)

- The AI adopts three domain-expert personas across phases: a Business Analyst in Phase 1, an Architect in Phase 2, and a QA Challenger in Phase 3

This maps to the traditional Three Amigos roles, but adapted for a solo developer working with AI. You bring the domain knowledge and implementation context. The AI brings three distinct expertise lenses—the BA who thinks in regulations and consent mechanisms, the architect who thinks in service patterns and failure modes, and the QA engineer who tries to break everything.

The persona separation is intentional. The BA in Phase 1 deliberately avoids looking at the codebase—if the AI explores the code first, it unconsciously scopes requirements to what's easy to build. The Architect in Phase 2 deliberately dives into it. Different phases need different thinking.

A session has four phases:

Phase 1—Create the BDD (Business Design Document):

1. You explain the feature—the why and the what, not the how

2. Together you define business rules, user stories, and scope boundaries

3. The AI applies a BA Expert Lens—for each business rule, it cross-checks regulatory requirements, audits data privacy implications, specifies dependency failure scenarios, and ensures consent mechanisms and empty states are covered

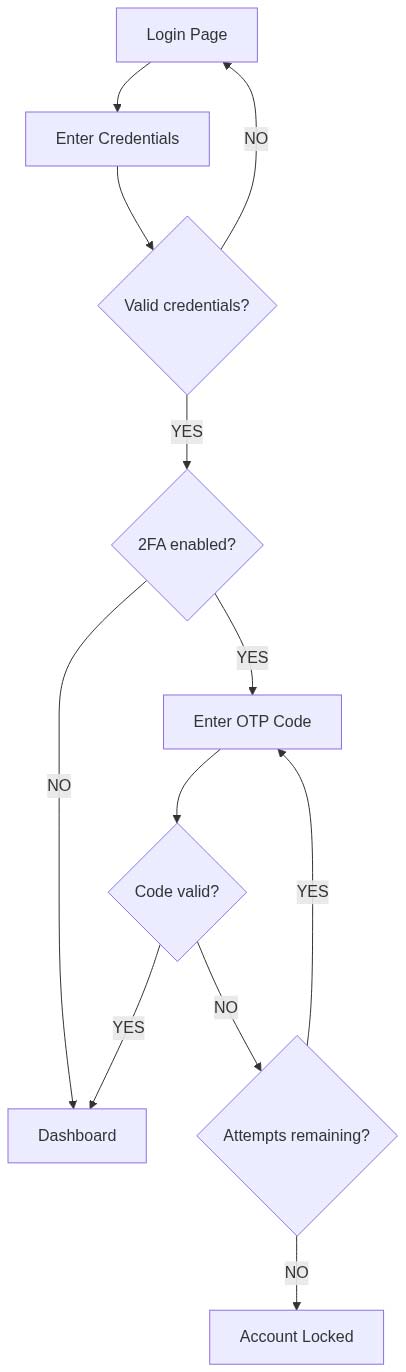

4. AI generates a flow diagram showing the user's journey through the feature

Phase 2—Create the TDD (Technical Design Document):

5. AI explores the codebase—finds existing patterns, constraints, reusable services

6. Together you identify technical constraints and integration points

7. The AI applies an Architect Expert Lens—checking framework conventions, PII/sensitive data design, reuse-before-create opportunities, integration point resilience (timeouts, retries, circuit breakers), and multi-process safety

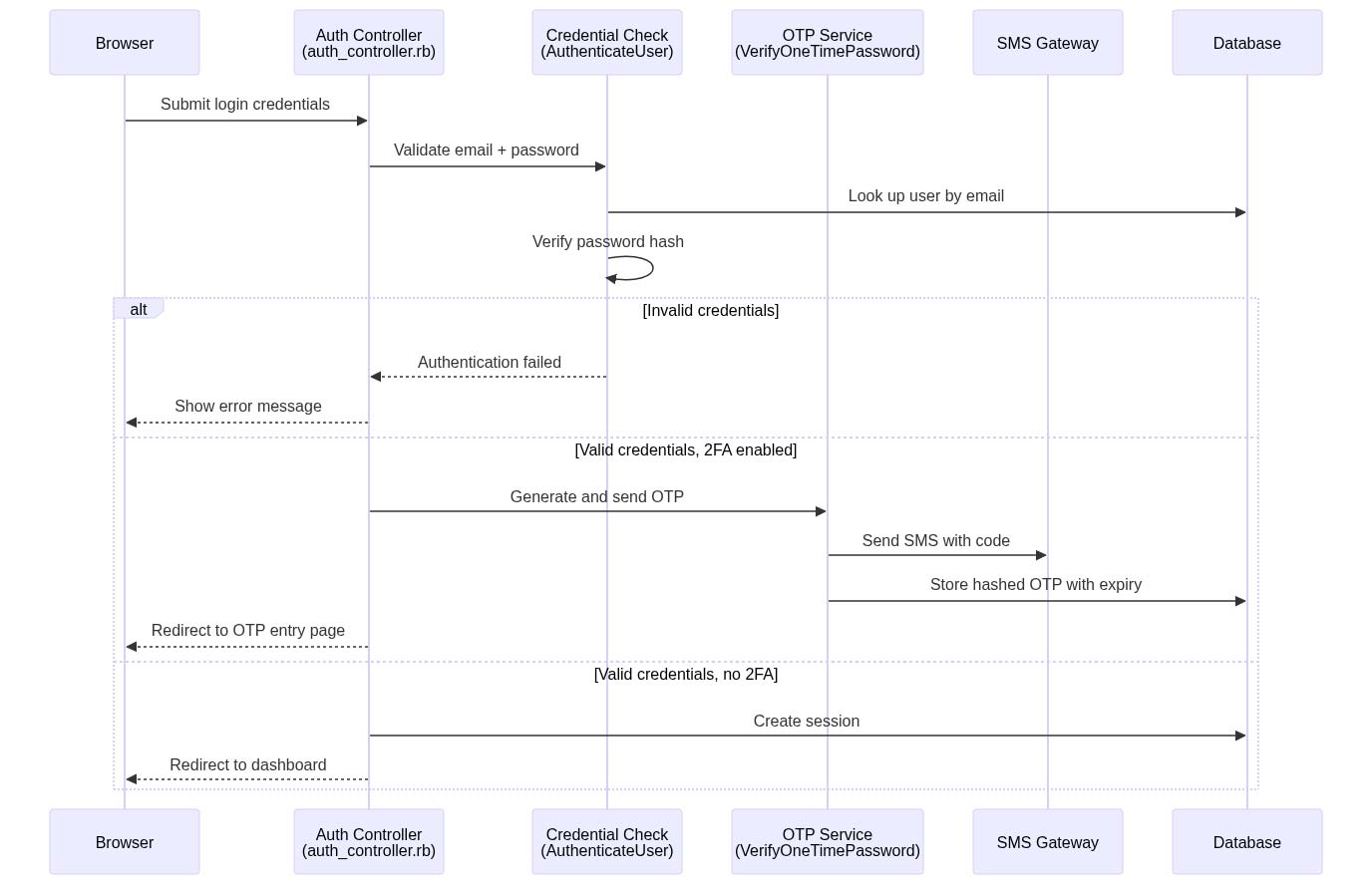

8. AI generates a sequence diagram showing system interactions with code breadcrumbs

Phase 3—Three Amigos session (using BDD + TDD as inputs):

9. AI plays QA Challenger—walks through both documents asking adversarial questions across 8 categories: happy path gaps, failure modes, edge cases, security boundaries, state management, existing user impact, scope boundaries, and BDD/TDD alignment

10. You answer or discuss—scope decisions get made now, not during implementation

11. Together you write Given/When/Then ACs derived from both documents, each tagged with a verification method (AUTO, MANUAL, or CODE)—these become the contract

Phase 4—Create artifacts:

12. Create a feature branch

13. Save all documents locally first—BDD, TDD, and ACs—with cross-reference headers linking them together

14. The ACs file gets a Quick Context section with copies of both diagrams, so you don't have to switch files during implementation

15. Optionally publish to a shared tool (Notion, Confluence, etc.) for team visibility

16. Then (and only then) you write code

The BDD ensures you're building the right thing. The TDD ensures you know how to build it. The ACs ensure everyone agrees on what "done" means. And local-first storage means the workflow works with nothing more than ~/.claude/ and markdown files.
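The QA Challenger's eight categories in Phase 3 also work as a checklist. Here is a minimal Ruby sketch that reports which categories nobody has challenged yet. It assumes you tag each question asked during the session with a category. The category symbols and the `Question` struct are hypothetical names.

```ruby
# The eight QA Challenger categories from Phase 3, as symbols.
QA_CATEGORIES = [
  :happy_path_gaps, :failure_modes, :edge_cases, :security_boundaries,
  :state_management, :existing_user_impact, :scope_boundaries, :bdd_tdd_alignment
].freeze

# Hypothetical record of one adversarial question from the session.
Question = Struct.new(:category, :text)

# Returns the categories no question has touched yet.
# Rejects typos so a mislabeled question can't hide a gap.
def unchallenged_categories(questions)
  asked = questions.map(&:category)
  unknown = asked - QA_CATEGORIES
  raise ArgumentError, "unknown categories: #{unknown.inspect}" unless unknown.empty?

  QA_CATEGORIES - asked
end

session = [
  Question.new(:failure_modes, "What if the payment gateway times out?"),
  Question.new(:bdd_tdd_alignment, "What about public endpoints?")
]

puts unchallenged_categories(session).inspect
```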

How the three documents feed each other

The order isn't arbitrary. Each document builds on the last, and each prevents a specific failure mode.

BDD first—prevents technical bias. You define business rules without looking at the codebase. This is intentional. If you explore the code first, you unconsciously scope your requirements to what's easy to build. A feature might need "rate limiting for all API endpoints," but if you've already seen that the rate limiter only works for authenticated routes, you'll write a narrower requirement without realizing it. The BDD captures what should happen, not what currently happens.

The flow diagram is the BDD's most valuable artifact. Every diamond node is a business decision that needs at least one acceptance criterion later.
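Mermaid writes a decision (rhombus) node as `id{label}`, so this rule can be checked mechanically. Here is a minimal Ruby sketch that lists decisions with no AC yet. It assumes a hypothetical hand-maintained map from AC IDs to the decision node each one exercises. The flowchart, node IDs, and AC IDs below are invented for illustration.

```ruby
# A small example flowchart in Mermaid syntax (invented for illustration).
FLOW = <<~MERMAID
  flowchart TD
    A[Submit credentials] --> B{Credentials valid?}
    B -->|No| C[Show login error]
    B -->|Yes| D{2FA enabled?}
    D -->|No| E[Create session]
    D -->|Yes| F[Send OTP]
    F --> G{OTP correct within 5 minutes?}
MERMAID

# Extracts rhombus nodes written as id{label}. Hexagons ({{label}}) and
# square nodes ([label]) don't match the single-brace pattern.
def decision_nodes(mermaid)
  mermaid.scan(/\b(\w+)\{([^{}]+)\}/).to_h
end

# Hypothetical mapping from AC IDs to the decision node each one covers.
AC_TO_DECISION = {
  "AC-LOGIN-1" => "B",
  "AC-2FA-1" => "G"
}.freeze

# Returns {node_id => label} for every decision no AC points at.
def uncovered_decisions(mermaid, ac_map)
  covered = ac_map.values
  decision_nodes(mermaid).reject { |id, _label| covered.include?(id) }
end

puts uncovered_decisions(FLOW, AC_TO_DECISION).inspect
```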

TDD second—surfaces gaps between intent and reality. Now the AI explores the codebase and finds what's actually there. This is where the interesting tensions appear:

- BDD says "store granular permission flags" → TDD says "the schema only has a single role enum"

- BDD says "rate limit all endpoints" → TDD says "the middleware only covers authenticated routes"

- BDD says "email notification on failure" → TDD says "there's an existing notification service we can extend"

These gaps become questions for Phase 3. Without the TDD, you'd discover them during implementation—when they're expensive to fix.

Three Amigos third—uses both as inputs. The AI walks through both documents as QA Challenger, and the conversation sounds like this:

QA (from BDD): "Business rule 2 says admins get email AND SMS notifications, but rule 3 says regular users get email-only. Why the difference?"

You: "Regular users haven't verified a phone number yet. Admins are required to have 2FA with a phone."

QA (from TDD): "The sequence diagram shows 4 possible outcomes from the payment endpoint. What if the payment gateway times out? Does the user see a spinner forever?"

You: "30-second timeout, then redirect to a retry page with the order preserved."

QA (BDD vs TDD): "BDD says rate limit all endpoints. TDD says the middleware only covers authenticated routes. What about public endpoints?"

You: "Good catch. Add IP-based rate limiting for public endpoints. That's a separate AC."

Each answer becomes an acceptance criterion, tagged with a verification method:

```
AC-TIMEOUT-1: Given a payment request is submitted, When the gateway
does not respond within 30 seconds, Then redirect to retry page
with order preserved
Verification: AUTO

AC-RATELIMIT-2: Given unauthenticated requests to a public endpoint,
When the same IP exceeds 100 requests per minute, Then return 429
Verification: AUTO

AC-SECURITY-3: Given the old unprotected endpoint existed,
When the new rate-limited version ships, Then the old endpoint
is removed and returns 404
Verification: CODE
```

There are three verification types: AUTO (can be tested with an integration or unit test), MANUAL (requires browser or visual verification), and CODE (verified by code inspection, e.g., a removed endpoint or security hardening). This classification drives what happens next: AUTO criteria become failing tests, MANUAL criteria feed QA test plans, and CODE criteria go on a review checklist.

The result is a set of ACs that cover happy paths (from the BDD flow diagram), technical edge cases (from the TDD sequence diagram), and scope decisions (from the QA Challenge). No single perspective would have produced all three.
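Once every AC carries a tag, the classification step is mechanical. Here is a minimal Ruby sketch using the three example ACs above. The `AcceptanceCriterion` struct and the destination names are hypothetical. An unknown tag raises `KeyError` rather than being silently dropped.

```ruby
# Hypothetical AC record: just the ID and its verification tag.
AcceptanceCriterion = Struct.new(:id, :verification)

# Where each verification type ends up, per the classification above.
DESTINATIONS = {
  "AUTO" => :failing_tests,
  "MANUAL" => :qa_test_plan,
  "CODE" => :review_checklist
}.freeze

# Groups AC IDs by destination. Hash#fetch raises KeyError on an unknown tag.
def route(criteria)
  criteria
    .group_by { |ac| DESTINATIONS.fetch(ac.verification) }
    .transform_values { |acs| acs.map(&:id) }
end

acs = [
  AcceptanceCriterion.new("AC-TIMEOUT-1", "AUTO"),
  AcceptanceCriterion.new("AC-RATELIMIT-2", "AUTO"),
  AcceptanceCriterion.new("AC-SECURITY-3", "CODE")
]

puts route(acs).inspect
```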

Who reads what

Not everyone needs every document:

The BDD is written in business language—no file paths, no service names. A product owner can read it and confirm "yes, that's what I want" without knowing the tech stack. The TDD is written for developers—every participant label in the sequence diagram is a file they can open. The ACs are the shared contract that bridges both worlds.

Diagrams that tell developers where to look

ACs define the what. Diagrams show the where—both in the user's journey and in the code.

The AI generates two Mermaid diagrams during the BDD and TDD phases—one per document:

Flow diagram—the user's journey through the feature. Every decision point maps to one or more ACs:

Sequence diagram—the system interactions behind the scenes, written in plain English with code breadcrumbs. The messages describe what's happening; the participant names and annotations tell developers where to find it:

The messages read like a story—"Submit login credentials," "Validate email + password," "Generate and send OTP." A product owner or QA engineer can follow the flow without knowing the tech stack. But for the developer, the participant labels are breadcrumbs: AuthenticateUser tells you the exact service class, auth_controller.rb tells you the controller file. You get the what and the where in one diagram.

Both diagrams render natively in GitHub PRs, Notion code blocks, and most markdown viewers. They live alongside the ACs in the local feature docs, giving reviewers the full picture without reading a line of implementation code.

The Given/When/Then format

One-line specs are ambiguous. Gherkin-style ACs force precision:

One-line (ambiguous):

User can log in with 2FA

Given/When/Then (precise):

```
Given a user with 2FA enabled submits valid credentials, When they enter
the correct OTP within 5 minutes, Then a session is created and they are
redirected to the dashboard
Verification: AUTO
```

The second version makes preconditions, actions, and expected outcomes explicit. A developer can implement from it. A QA engineer can test from it. A product owner can sign off on it. And an AI can write a failing test from it without guessing. The verification tag tells you how it will be verified—this AC becomes a test, not a manual check.
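That claim holds because the structure is regular enough to parse. Here is a minimal Ruby sketch that splits the AC above into the pieces a test needs: setup, action, and assertions. The `parse_ac` helper and its regex are hypothetical. The sketch assumes each AC is a single sentence with comma-separated Given, When, and Then clauses and an optional trailing verification tag.

```ruby
# Hypothetical parser: the lazy groups stop at the first ", When" and ", Then".
AC_PATTERN = /\AGiven (?<precondition>.+?),\s*When (?<action>.+?),\s*Then (?<outcome>.+?)\s*(?:Verification:\s*(?<verification>AUTO|MANUAL|CODE))?\z/

def parse_ac(text)
  normalized = text.gsub(/\s+/, " ").strip # join hard-wrapped lines
  match = AC_PATTERN.match(normalized)
  raise ArgumentError, "not a Given/When/Then AC: #{normalized}" unless match

  {
    precondition: match[:precondition], # becomes test setup
    action: match[:action],             # becomes the call under test
    outcome: match[:outcome],           # becomes the assertions
    verification: match[:verification]  # nil when no tag is present
  }
end

ac = parse_ac(<<~AC)
  Given a user with 2FA enabled submits valid credentials, When they enter
  the correct OTP within 5 minutes, Then a session is created and they are
  redirected to the dashboard
  Verification: AUTO
AC

puts ac[:action]
```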

Setting it up

Everything lives in ~/.claude/. Claude Code already uses this directory for commands, settings, and memory—you're just adding plans alongside them.

```
~/.claude/
├── commands/                 # Slash commands
│   ├── new-feature.md        # The four-phase Three Amigos process
│   └── implement.md          # TDD loop driven by approved ACs
├── plans/                    # Feature plans (BDD, TDD, ACs)
│   └── login-2fa/
│       ├── bdd.md
│       ├── tdd.md
│       └── acceptance-criteria.md
└── rules/                    # Rules loaded into every session
    └── workflow.md
```

You don't need all of this to start. The minimum viable setup is a single command file—~/.claude/commands/new-feature.md—that encodes the four-phase Three Amigos process. Everything else layers on as you find friction points worth automating.

Plans

The three documents from each feature live in ~/.claude/plans/[feature-name]/. This keeps them where Claude Code can read them directly—no integrations needed. If you want team visibility, publish them to Notion, Obsidian, Confluence, or wherever your team already works. The local files are the source of truth.
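Setting up a new feature folder is easy to script. Here is a minimal Ruby sketch that assumes the file names from the tree above. The `scaffold_plan` helper and the exact header format are hypothetical. They stand in for the cross-reference headers mentioned in Phase 4.

```ruby
require "fileutils"
require "tmpdir"

# The three documents from the tree above, with hypothetical titles.
PLAN_DOCS = {
  "bdd.md" => "Business Design Document",
  "tdd.md" => "Technical Design Document",
  "acceptance-criteria.md" => "Acceptance Criteria"
}.freeze

# Creates <base>/<feature>/ and seeds each document with a header that links
# to the other two. Existing files are never overwritten.
def scaffold_plan(feature, base: File.join(Dir.home, ".claude", "plans"))
  dir = File.join(base, feature)
  FileUtils.mkdir_p(dir)

  PLAN_DOCS.each do |file, title|
    path = File.join(dir, file)
    next if File.exist?(path)

    links = (PLAN_DOCS.keys - [file]).map { |other| "[#{PLAN_DOCS[other]}](#{other})" }
    File.write(path, "# #{title}: #{feature}\n\nSee also: #{links.join(', ')}\n")
  end

  dir
end

# Usage against a throwaway directory instead of ~/.claude/plans:
Dir.mktmpdir do |tmp|
  dir = scaffold_plan("login-2fa", base: tmp)
  puts Dir.children(dir).sort.inspect
end
```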

Every plan follows this template:

This maps directly to the three documents: Context + Constraints + Examples feed the TDD. Intent feeds the BDD. Acceptance Criteria are the output of the Three Amigos session that uses both as inputs.
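A plan that skips a section can be caught before the Three Amigos session starts. Here is a minimal Ruby sketch that does this. It assumes each template section appears as a `## ` heading named Intent, Context, Constraints, Examples, or Acceptance Criteria. Both the heading format and the `missing_sections` helper are assumptions.

```ruby
# Which document each template section feeds, per the mapping above.
SECTION_FEEDS = {
  "Intent" => :bdd,
  "Context" => :tdd,
  "Constraints" => :tdd,
  "Examples" => :tdd,
  "Acceptance Criteria" => :three_amigos_output
}.freeze

# Returns required sections that don't appear as level-2 ("## ") headings.
def missing_sections(markdown)
  headings = markdown.scan(/^##[ \t]+(.+?)[ \t]*$/).flatten
  SECTION_FEEDS.keys - headings
end

plan = <<~MD
  ## Intent
  Users with a phone number on file must confirm logins with a one-time code.

  ## Context
  Follow the password reset flow pattern.

  ## Acceptance Criteria
  (filled in during the Three Amigos session)
MD

puts missing_sections(plan).inspect
```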

Writing forces clarity. A vague idea like "add 2FA" becomes specific:

- Which users need 2FA? (those with phone numbers on file)

- What happens if they fail? (redirect to login with error)

- What existing code should we follow? (the password reset flow pattern)

- How will we know it's done? (twelve Given/When/Then acceptance criteria)

This takes 15-30 minutes. It saves hours of back-and-forth with the AI and catches edge cases before a single line of code is written.

From ACs to code

This is where the planning pays off. You run /implement login-2fa, and it reads the plans from ~/.claude/plans/, scopes work by the TDD's build sequence, and drives the loop one AC at a time: failing test, implement, commit. Once the tests pass, they become the documentation: executable, unable to lie, and always current. The plan was scaffolding.

Here's the key insight: your acceptance criteria are your AI prompt. Since plans live in ~/.claude/plans/, the AI reads them directly—no integrations needed. Each Given/When/Then AC maps to a test case:

```ruby
context "when a user with 2FA enters a valid OTP" do
  it "creates a session and redirects to the dashboard" do
    result = verify_otp(user: user_with_2fa, code: valid_code)

    expect(result.session).to be_present
    expect(result.redirect_path).to eq("/dashboard")
  end
end
```

No interpretation needed. The AC is the test specification. The test name describes the behavior, so anyone reading the spec can understand what it verifies without referencing the plan.
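For the "implement until green" half, here is one minimal Ruby implementation that would satisfy that spec. Only `verify_otp`, `session`, and `redirect_path` come from the spec. The `User` and `Result` structs, the injectable `now:` clock, and the failure behavior are hypothetical. The failure behavior is borrowed from the 2FA example's "redirect to login with error" rule.

```ruby
# Hypothetical domain objects; only verify_otp, session, and redirect_path
# come from the spec.
User = Struct.new(:id, :otp_code, :otp_sent_at, keyword_init: true)
Result = Struct.new(:session, :redirect_path, :error, keyword_init: true)

OTP_TTL_SECONDS = 5 * 60 # "within 5 minutes" from the AC

# now: is injectable so tests can control the clock.
def verify_otp(user:, code:, now: Time.now)
  expired = now - user.otp_sent_at > OTP_TTL_SECONDS
  # Plain comparison keeps the sketch short; production code should use a
  # constant-time comparison for secrets.
  if expired || user.otp_code != code
    return Result.new(redirect_path: "/login", error: expired ? :expired : :invalid)
  end

  Result.new(session: { user_id: user.id, created_at: now }, redirect_path: "/dashboard")
end

user = User.new(id: 1, otp_code: "123456", otp_sent_at: Time.now - 120)
puts verify_otp(user: user, code: "123456").redirect_path # prints /dashboard
```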

The implementation loop is:

- Scope PRs from ACs. Group ACs into shippable slices using the TDD's build sequence—it's already ordered by dependency. Primary path ACs ship first, guard rails and enhancements layer on top.

- Write failing tests. For each AC tagged AUTO, write a failing test from the Given/When/Then spec.

- Implement until green. The AI has an unambiguous target: make these specific tests pass. The TDD tells it which files to create or modify and which existing patterns to follow.

- Commit and repeat. Next AC, next test, next commit. The build sequence tells you what order.
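The first step, scoping PRs, can be sketched in a few lines of Ruby. The sketch assumes each AC has been annotated with two things: the TDD build step it depends on, and a role (primary, guard rail, or enhancement). The `SliceAC` struct, the role names, and the AC IDs are hypothetical.

```ruby
# Hypothetical AC annotation: TDD build step plus the AC's role in the feature.
SliceAC = Struct.new(:id, :build_step, :role)

# Primary path ships first, guard rails next, enhancements last.
ROLE_ORDER = { primary: 0, guard_rail: 1, enhancement: 2 }.freeze

# Orders ACs by role, then by build step, and starts a new slice (PR)
# whenever the role changes.
def slice_into_prs(criteria)
  criteria
    .sort_by { |ac| [ROLE_ORDER.fetch(ac.role), ac.build_step] }
    .chunk_while { |a, b| a.role == b.role }
    .map { |slice| slice.map(&:id) }
end

criteria = [
  SliceAC.new("AC-RATELIMIT-2", 4, :guard_rail),
  SliceAC.new("AC-2FA-1", 2, :primary),
  SliceAC.new("AC-2FA-REMEMBER-1", 5, :enhancement),
  SliceAC.new("AC-LOGIN-1", 1, :primary),
  SliceAC.new("AC-2FA-EXPIRY-1", 3, :guard_rail)
]

slice_into_prs(criteria).each_with_index do |ids, i|
  puts "PR #{i + 1}: #{ids.join(', ')}"
end
```

Sorting by role before build step assumes guard rails never block the primary path. If one does, sort by `build_step` alone and let the TDD's dependency order decide.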

Anthropic's own documentation hints at why this works: "Claude performs best when it has a clear target to iterate against—a test case, visual mock, or other output." Acceptance criteria are the clearest target you can give it.

The tooling

I encode this entire workflow into Claude Code commands, skills, and agents so I don't have to explain the process every time. Here's what a feature looks like end-to-end:

Start the Three Amigos session:

```
/new-feature Add two-factor authentication to the login flow
```

This kicks off the four phases. The command encodes everything: the persona switches, the expert lenses, the diagram generation, the QA Challenger categories. I just answer questions and make decisions. When we're done, the BDD, TDD, and ACs are saved to ~/.claude/plans/login-2fa/ and a feature branch is created.

During Phase 2, agents do the codebase exploration. The AI launches three specialized agents in parallel—a codebase analyzer that traces how existing code works with file:line references, a codebase locator that finds where relevant code lives, and a pattern finder that surfaces existing implementations to reuse. These feed the TDD with real codebase knowledge instead of assumptions.
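The fan-out itself is a standard concurrency pattern. Here is a minimal Ruby sketch using threads. The three lambdas are hypothetical stand-ins for the agents; in Claude Code the agents are structured prompts, not Ruby code. The sketch only shows the shape: start all three, wait for each, merge the findings.

```ruby
# Hypothetical stand-ins for the three exploration agents.
AGENTS = {
  analyzer: ->(topic) { "how #{topic} works today, with file:line references" },
  locator: ->(topic) { "where #{topic} code lives" },
  pattern_finder: ->(topic) { "existing implementations similar to #{topic}" }
}.freeze

# Runs every agent concurrently, then merges their findings into one hash
# for the TDD draft. Thread#value waits for each thread to finish.
def explore(topic)
  threads = AGENTS.map { |name, agent| Thread.new { [name, agent.call(topic)] } }
  threads.map(&:value).to_h
end

findings = explore("login")
findings.each { |name, summary| puts "#{name}: #{summary}" }
```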

Implement the approved plan:

```
/implement login-2fa
```

This reads the plans, scopes work by the TDD's build sequence, and drives the test-driven loop: write a failing test for the next AC, implement until green, commit, repeat.

Check coverage anytime:

```
/test-gaps
```

This maps your ACs to actual test files, showing which ACs are covered, which are partially covered, and which have no tests yet. It auto-detects your test framework and searches by behavior, not by AC IDs.
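The following is not the command's implementation. It is a deliberately naive Ruby sketch of the same idea: search by behavior rather than by AC ID. It takes the words of each AC's Then clause, crudely stems them to five letters, and checks how many appear in the test sources. The stopword list, the stemming, the status rules, and all names are hypothetical.

```ruby
STOPWORDS = %w[they with that then when given from into].freeze

# Naive behavior keywords: words of 4+ letters, minus stopwords,
# crudely stemmed to their first five letters ("redirected" -> "redir").
def keywords(text)
  (text.downcase.scan(/[a-z]{4,}/) - STOPWORDS).map { |word| word[0, 5] }.uniq
end

# Classifies each AC by how many of its outcome keywords appear in the tests.
def coverage(outcomes_by_ac, test_sources)
  corpus = test_sources.join("\n").downcase
  outcomes_by_ac.to_h do |id, outcome|
    words = keywords(outcome)
    found = words.count { |word| corpus.include?(word) }
    status =
      if words.empty? || found.zero?
        :missing
      elsif found == words.size
        :covered
      else
        :partial
      end
    [id, status]
  end
end

outcomes = {
  "AC-2FA-1" => "a session is created and they are redirected to the dashboard",
  "AC-2FA-EXPIRY-1" => "the expired OTP is rejected with an error",
  "AC-RATELIMIT-2" => "respond with status 429"
}

specs = [<<~SPEC]
  it "creates a session and redirects to the dashboard" do
    expect(result.session).to be_present
    expect(result.redirect_path).to eq("/dashboard")
  end

  it "returns an error for a wrong code" do
    expect(result.error).to eq(:invalid)
  end
SPEC

puts coverage(outcomes, specs).inspect
```

Even the crude stemming shows why behavior matching is hard. A spec that says "rejects" would count, but one that says "refuses" would not. Treat output like this as a prompt for review, not a verdict.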

The commands are just markdown files in ~/.claude/commands/. The agents are markdown files in ~/.claude/agents/. You can read them, modify them, add your own. There's nothing magical—they're prompts with structure.

I've published the core workflow as a Claude Code plugin you can install with one command:

```
claude plugin install andrewvida/three-amigos-with-ai
```

Or start from scratch with just ~/.claude/commands/new-feature.md and build your own version. The process matters more than the tooling.

When this breaks down

The Three Amigos + TDD workflow isn't always practical:

- Exploratory work—Sometimes you need to spike before you know what to test

- UI-heavy features—Visual correctness is hard to capture in Given/When/Then assertions

- Tight deadlines—Writing ACs first feels slower (even though it's usually faster overall)

- Solo context—The QA Challenger works best when you engage honestly with the questions instead of dismissing them

For these cases, I fall back to lighter-weight planning and manual verification. But I recognize I'm trading precision for speed.

The point

The tooling doesn't matter. The process does.

AI doesn't need to be slower. It needs a better target. The best targets come from structured, adversarial collaboration—not from a developer's solo interpretation of a ticket.

A Business Design Document forces you to define business rules before touching the codebase. A Technical Design Document surfaces constraints before writing acceptance criteria. A Three Amigos session forces you to think about what could go wrong before you build. Given/When/Then acceptance criteria give the AI an unambiguous contract. Tests make that contract executable. Persistent context files ensure your principles survive across sessions.

The order matters: business rules first, technical design second, acceptance criteria third. Each document builds on the last. Skipping straight to ACs without the business design means your acceptance criteria inherit the developer's assumptions. Skipping the technical design means your ACs ignore the codebase's actual constraints.

AI will keep getting faster. The question is whether it's building the right thing. The difference is a 15-minute conversation with an AI playing devil's advocate—before the first commit, not after the fifty-seventh.

The Three Amigos practice is generally attributed to George Dinwiddie. For more on agile testing practices, see Lisa Crispin and Janet Gregory's Agile Testing books and Crispin's post on Example Mapping.

Andy Vida is a Senior Software Consultant at Test Double who helps teams build the right thing with AI—not just faster, but with more precision, purpose, and quality.