The software that propels a business and the software that eventually holds it back are often the same thing. Systems don't become legacy by failing. They become legacy by succeeding long enough to accumulate the weight of every decision, shortcut, and adaptation made along the way. That weight is earned. At some point, though, legacy software starts costing more than it's worth.

We've helped enough organizations navigate that moment that we've gotten precise about what's actually happening. And lately, a new question keeps surfacing: in a world where AI can read your codebase in seconds and write new features in minutes, does "legacy" even mean the same thing anymore?

The answer is both yes and no. What makes a system legacy has changed, and what it takes to modernize one has changed too. But the shift is worth working through carefully, because not everything has changed equally, and getting that wrong leads businesses to solve the wrong problem.

What legacy code looked like before AI

Legacy software was always easier to recognize than to define. You'd open a codebase and see five-hundred-line functions, multi-thousand-line files, no tests, and a CI pipeline that half the team had stopped trusting. You'd hear users complaining about slow load times, intermittent errors, and features that broke when you touched something on the other side of the system. Or you'd hear the business’s side of the story: a feature addition that used to take a week now takes six months, and nobody can tell you why.

When I think about what actually makes a software system legacy, three signals show up consistently, and which one you notice depends on who's describing the system. End users feel friction. Engineers feel complexity eroding their momentum. The business feels costs climbing. Same software system, three different experiences of the same underlying decay.

The user's legacy software signal: Friction

Users don't think in terms of legacy software. They think in terms of whether the system helps them get something done or whether it gets in the way. That gap between what a user needs and what the system delivers is friction, and it tends to grow quietly over time.

Part of what drives that growth is a structural imbalance in how most software teams operate. Teams are generally excellent at turning new ideas into features. They are much worse at removing the features nobody uses. The result is a product surface that only ever expands: more options, more paths, more complexity, and no one asking what could safely be cut. Most teams don't even measure which features users actually rely on, so they can't answer that question even when they want to.

That accumulation has real consequences. Bloat slows systems down. Broader surface area creates more places for inconsistencies and defects to hide. Performance degrades not because anything broke, but because the system is now carrying far more than it was designed to carry. Users feel all of this before anyone can explain it technically. The product starts to feel heavy, unpredictable, and hard to navigate. They don't file bug reports. They quietly lose confidence in the system and eventually route around it or stop using it altogether.

The software engineer's legacy software signal: Momentum

For engineers, the clearest sign that a system has become legacy has nothing to do with whether the code is "clean" in some aesthetic sense. What matters is whether a developer who's new to this part of the system can come in, understand what's happening, make a change with confidence, and get it out the door without holding their breath. That's momentum. And it comes from more than just the codebase.

The code itself matters, but internal consistency matters more than idiomatic purity. A system doesn't need to match some community ideal of how a language or framework should be used. It needs to be coherent for the engineers who work in it. Consistent abstractions, legible intent, patterns that a new engineer can learn once and apply everywhere. A system with its own clear internal logic is far easier to move fast in than one that follows textbook best practices but shifts approach every few hundred lines.

The infrastructure around the code plays a huge role in momentum as well. Can a new engineer get a change into production in their first week? That question reveals a lot, not just about the codebase, but about the test suite, the CI/CD pipeline, the deployment process, and the team's discipline around keeping all of it useful. A well-functioning pipeline runs automatically, validates that the system works as designed, and promotes changes to production without anyone having to shepherd each step by hand. When that's in place, engineers have momentum. When it's not, every change becomes an exercise in anxiety management, however clean the code is.

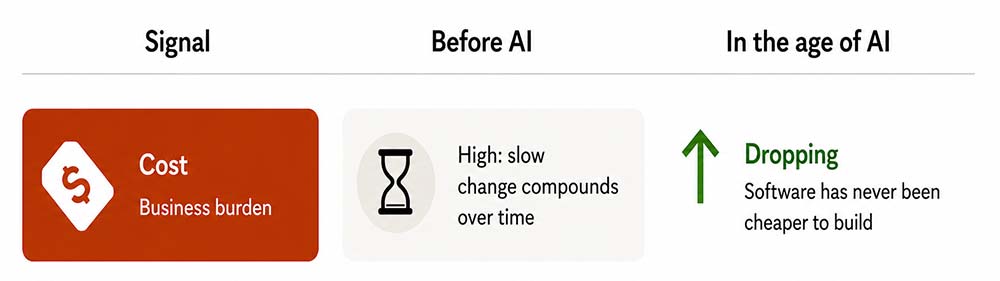

The business's legacy software signal: Expense

Legacy systems are expensive not just because they're slow to change, but because that slowness compounds. Every feature added without pruning the ones nobody uses makes the next change harder. Every sprint without clear visibility into what users actually value adds cost without adding confidence. The system grows heavier and more opaque over time, and the bill for each incremental change keeps climbing while the return on that investment becomes harder to justify.

How the team is organized matters too, and it shows up directly in the finances. Small teams move faster. The communication overhead of adding people to a project is real: more people means more coordination, more differing perspectives, and more redundant effort. A team of two spends the vast majority of its week actually delivering software and relatively little on supporting functions. A team of 100 may have that ratio inverted. Organizational structure doesn't just affect culture; it affects the cost of every change made within the system.
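To put a rough number on that overhead, one common heuristic (from Fred Brooks's The Mythical Man-Month, not from our own measurements) counts the pairwise communication paths in a team of $n$ people, assuming every member may need to coordinate with every other member:

$$
C(n) = \frac{n(n-1)}{2}, \qquad C(2) = 1, \qquad C(10) = 45, \qquad C(100) = \frac{100 \cdot 99}{2} = 4950
$$

Headcount grows linearly, but the potential coordination paths grow roughly with the square of team size. Real teams cap this with structure, so treat these figures as an upper bound that illustrates the trend rather than a measurement of any particular organization.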

The tipping point for legacy software

Most clients reach out to us when the math has stopped working: something that used to take a week now takes months, and the team can't say exactly why. The answer is usually distributed across all three of these dimensions at once. Users are losing patience with a system that gets in their way, engineers are losing momentum, and the business is losing tolerance for the bill.

What AI changes for legacy software

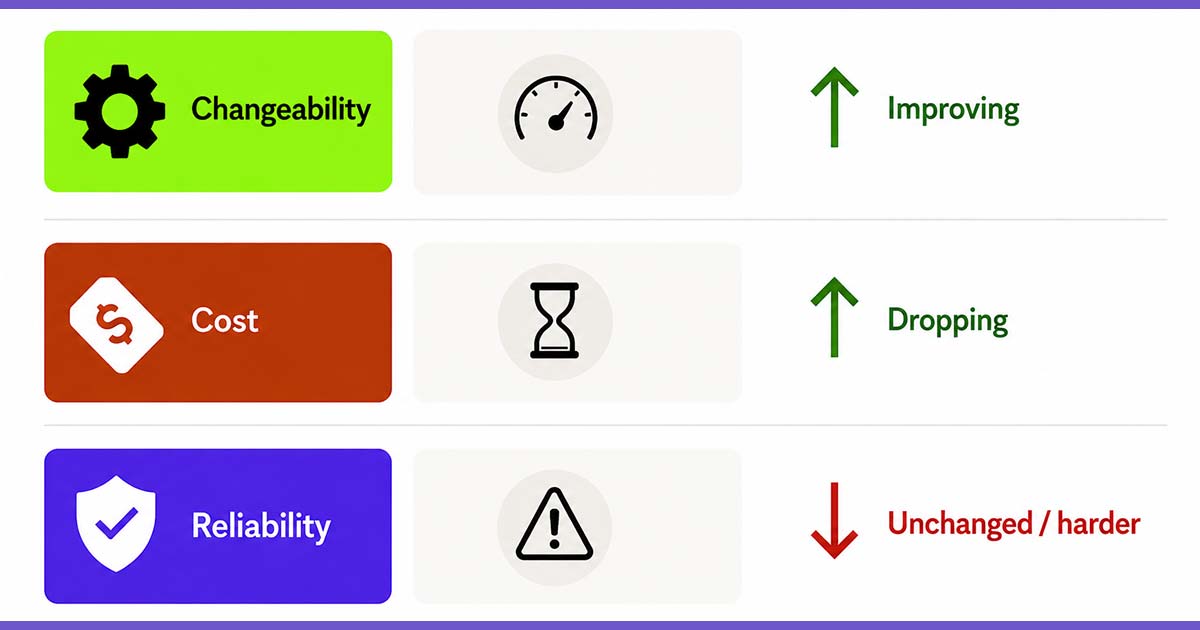

Two of the three signals that have historically defined legacy, engineering momentum and business expense, are becoming less acute problems in the age of AI.

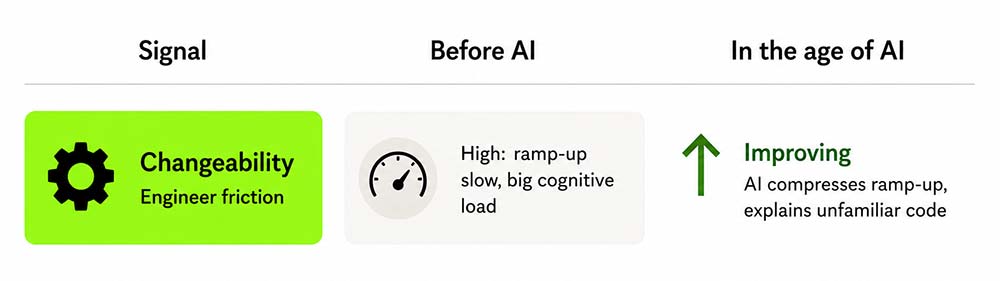

AI-empowered developers are meaningfully faster at ramping up in unfamiliar codebases. The cognitive overhead of navigating complexity drops when you have a tool that can summarize, explain, and trace behavior on demand. One of our consultants coming in cold to a large, sprawling system can now get productive—understanding how it works, identifying where changes need to happen, and shipping those changes—in a fraction of the time it used to take. Engineers who once would have spent weeks finding their footing can now find it in days.

The cost of software development is changing too. Building new functionality, testing ideas, fixing defects—all of it is cheaper than it's ever been. "This is too expensive to maintain" is a weaker argument for abandoning a system than it used to be.

What AI doesn't change, and may be making worse

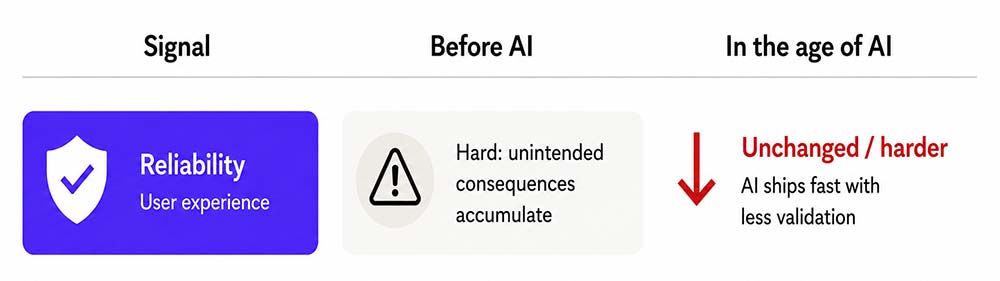

Unintended consequences haven't gone anywhere. And the friction that users experience within a system is still the thing that catches up with you.

If anything, the pace at which software is being built has outrun our collective discipline. Developers who trust AI-generated code without rigorously validating it are building systems that look fine on the surface and break in surprising ways under real conditions. That is exactly the lure of the tooling: things move fast, tests pass, it ships, and then something unexpected happens in production and it's not obvious why.

Feature bloat is also a more difficult problem than it has ever been. When AI can produce new functionality in hours, the already-weak discipline around pruning what nobody uses gets even harder to enforce. The product surface expands faster than it ever has, and even the most mature teams struggle to evaluate whether any of it is actually worth keeping.

The risk isn't that AI makes legacy software impossible to work with. The risk is that AI helps us build legacy software faster than ever, and with more confidence than we should have. Getting the user experience right hasn't gotten any easier, and the friction users feel may be getting harder to notice until it's too late.

In the next post in this series, we'll get into what it actually looks like to remediate legacy systems with AI tooling—where AI helps, where it creates false confidence, and how we approach these engagements at Test Double. After that, we'll tackle the harder question: how do you build systems today that don't become legacy tomorrow?

Todd Kaufman is the CEO of Test Double, a 100% employee-owned software consulting company that has been remote since 2011. Test Double helps organizations solve complex technical challenges through people, process, and technology consulting.