When engineers talk about observability, we usually mean systems. Logs, traces, metrics, dashboards. Enough signal to figure out what the software is actually doing once it leaves the whiteboard and starts misbehaving in production.

Organizations have the same challenge.

I've been calling the idea organizational observability: the degree to which an organization's intent is sufficiently visible and coherent for people to make good decisions within it.

That includes the obvious things like goals and strategy, but also the more important things that usually get lost between slides and status meetings: historical decisions, operating principles, architecture boundaries, tradeoffs, and how all of that changes over time.

A KPI by itself is not organizational observability. An OKR deck is not organizational observability. A task tracker full of tickets is definitely not organizational observability.

Those things tell you what is being measured, managed, or shipped. They rarely tell you enough about why, how, or what must not be broken along the way.

Context tarpits

In 1975, Fred Brooks described the tar pit in The Mythical Man-Month: the place where great projects sink because of the sheer friction of complexity and uncoordinated effort. Great and powerful beasts, thrashing themselves to death in the slow goo.

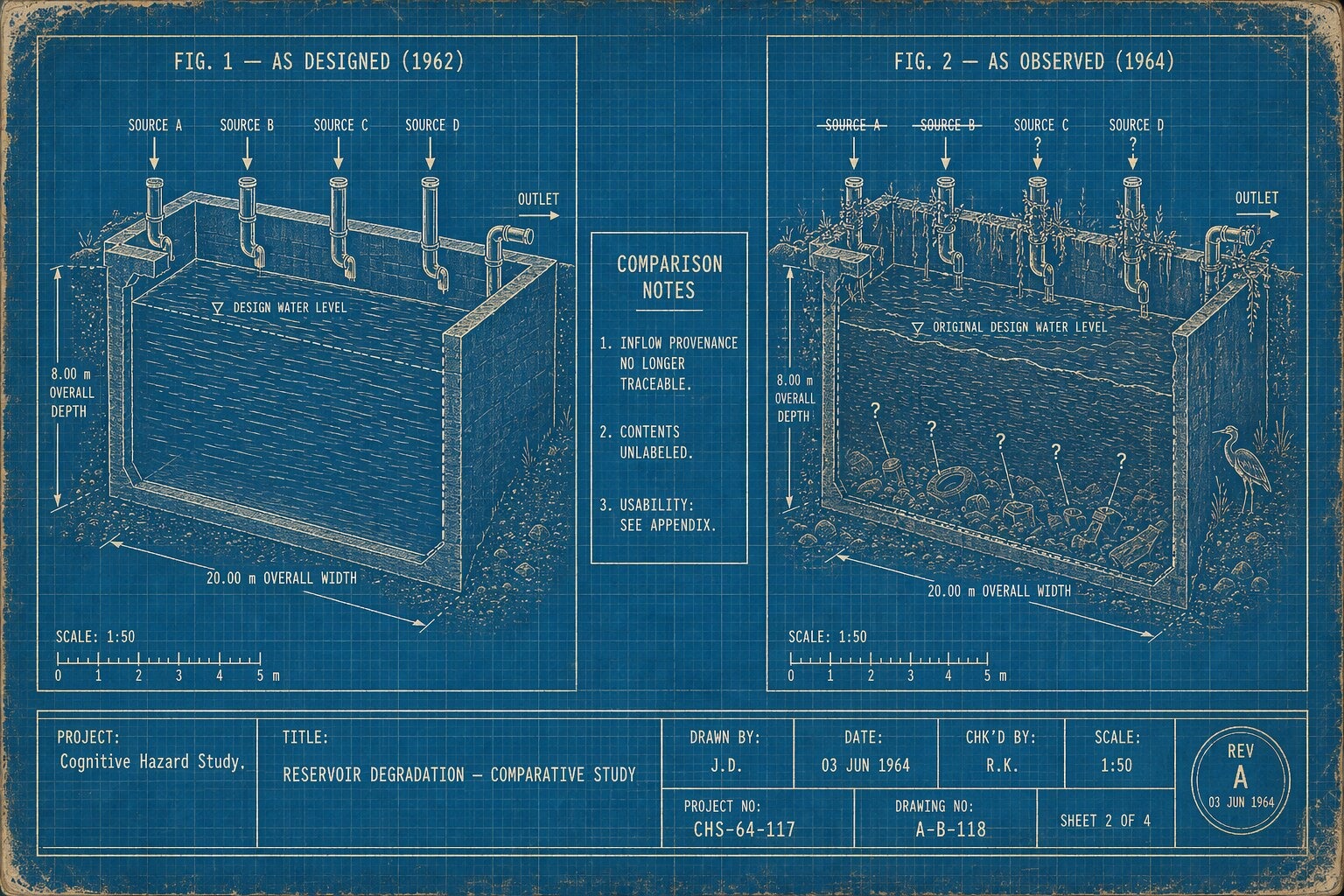

We've been reenacting that struggle ever since. The last decade gave us data lakes, centralized stores that were supposed to solve the fragmentation problem by making everything available. In a lot of organizations, they became data swamps: technically all there, practically unusable, because nobody preserved the context needed to know what any of it meant or whether it could be trusted.

Now we are doing the same thing with our strategic intent. I've started calling these places context tarpits: the spots in a company where context goes to die. Sometimes it lives in one person's head. Sometimes it's scattered across six systems that decay at six different rates. Sometimes it's technically documented, but only in stale, contradictory, or confusing forms—which is arguably worse, because outdated context feels authoritative right up until it leads to a bad decision.

Intent goes in. Coherent judgment struggles to come out.

How good ideas die in a tarpit

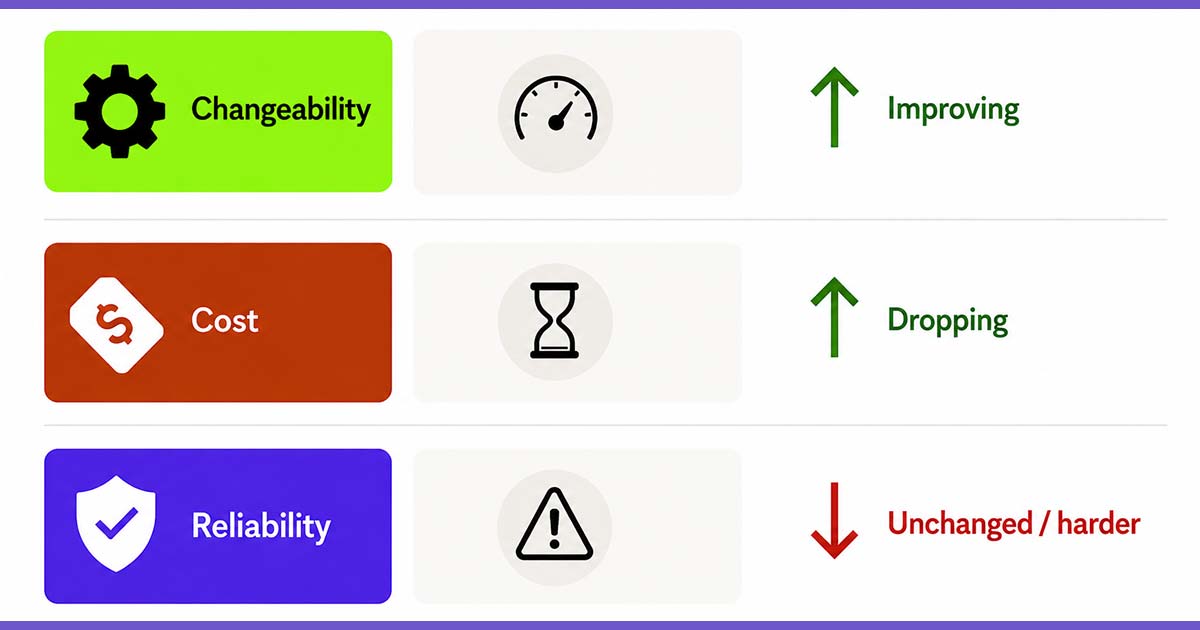

Much of the current agentic discourse is focused on the visible machinery: prompts, harnesses, evals, context windows, tools, MCP servers, and all the other wiring. Some of that is genuinely useful, and organizations should allocate time to experiment with all of it.

But my read is that many teams are trying to bolt agents onto organizations whose intent is still scattered across Slack, Notion, Google Docs, GitHub, meeting notes, and the heads of a few long-tenured people who have become the unofficial index of how the place actually works.

I’ve run into this a few times, long before anyone started calling everything agentic. In one case, the team was deciding what to build next quarter, and one proposal on the table was PowerPoint exports for analytics.

In isolation, the idea seemed sensible. You could imagine customers wanting it. You could imagine shipping it in a quarter. You could imagine it looking like "progress."

The problem was that it sat in tension with where the company was actually trying to go. At the time, there was growing investment in machine learning, better analytics, and making the experience more intelligent. In that context, PowerPoint exports looked less like strategy and more like a tidy, locally optimized win disconnected from the broader outcome the company was seeking.

Looking back, the pattern stuck with me because it made something visible: the issue was not whether a product person had a good or bad idea. The issue was that the company's intent was not sufficiently legible at the point of decision.

If a human in that meeting could fail to recognize the strategic misalignment, why would we expect an agent to do any better?

An agent in that scenario could not have caused the misalignment. But it would absolutely inherit it.

Context management is people management

The temptation is to treat all of this as an AI problem. It isn't. The same tarpit that traps an agent traps a human—the difference is that humans have developed coping mechanisms. A seasoned manager compensates for missing context through relationships, hallway conversations, tenure, and intuition. They "know" that a specific KPI is a vanity metric, or that a certain project is a tripwire they shouldn't touch.

Agents don't have any of that. If you give an agent a task without the broader context, it will happily optimize toward a local maximum with a kind of ruthless sincerity.

The lesson from the agent era is that context management is people management. The same clarity that gives an agent a chance of staying aligned is what lets a new hire exercise good judgment. If your organization isn't legible to a machine, it is probably not nearly as legible to your humans as you think.

Legibility, not exposure

Assume for a minute that the solution is to expose everything.

You can feel the temptation, especially right now. If lack of organizational context is the problem, then surely the fix is to shovel more context into the system. More docs, more retrieval, more integrations, more access to more places.

This is the data lake mistake all over again.

Visibility is not the same thing as understanding. Someone can be drowning in context and still be unable to see how the system works.

The goal is not maximal exposure. It is legibility.

By legibility, I mean something broader than raw access. The question is not just whether the information exists. It is whether a person or agent can find what matters, understand why it matters, trace how it changed, and act without wandering off into the next tarpit.

That is also why I don't want humans reduced to being "in the loop" as glorified approvers of machine output. I want humans in the lead. I want workflows that use AI to help capture, curate, and act on context while still preserving a person's ability to understand the system and direct where it goes.

So how would you measure organizational observability? I'd start by looking at how effective a company is at onboarding a new employee. How long does it take a new person to understand not just the tools and the org chart, but why the company works the way it does? How many systems do they need access to before they can make a good decision? How often does the answer to a basic strategic question still boil down to "you need to ask Carl"?

If onboarding a human is slow, fragile, and full of manual context reconstruction, onboarding an agent will fail in the exact same ways.

Evals inherit the same blind spots

This is one reason I'm skeptical when someone claims that evals are all you need.

Evals are useful. You absolutely want them. But an eval can only check against intent the organization has bothered to make explicit.

If you deploy an agent against a narrow task and evaluate it only within that narrow frame of reference, you can end up with something that scores well and still yields a bad outcome. The answer will be technically correct, and the resulting outcome organizationally wrong.

This has a compounding effect:

- fragmented context makes good evaluation harder

- weak evaluation rewards local correctness

- local correctness gets mistaken for alignment

- and then everyone acts surprised when the broader outcome is off

We keep looking for the mistake in the model, the prompt, or the harness. A lot of the time the upstream failure is that the organization never made its own intent observable enough to evaluate against in the first place.
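A toy sketch makes the point concrete. Everything in it is hypothetical: the `Proposal` shape, the demand signal, and the theme labels, which loosely paraphrase the machine learning and analytics direction from the PowerPoint story. The narrow eval passes the export proposal. The intent-aware eval can only catch the misalignment once the strategic themes have actually been written down; with nothing explicit to check against, it quietly behaves exactly like the narrow one.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Proposal:
    """A hypothetical roadmap proposal; every field here is illustrative."""
    name: str
    customer_requests: int  # hypothetical local demand signal
    fits_in_quarter: bool
    themes: set[str] = field(default_factory=set)  # strategic themes it advances


# Hypothetical explicit intent, loosely paraphrasing the strategy described earlier.
STRATEGIC_THEMES = {"machine-learning", "intelligent-analytics"}


def narrow_eval(p: Proposal) -> bool:
    """Scores only the local task frame: is it wanted, and can it ship this quarter?"""
    return p.customer_requests > 0 and p.fits_in_quarter


def intent_aware_eval(p: Proposal, themes: set[str]) -> bool:
    """Adds a check against whatever intent the organization has written down."""
    if not narrow_eval(p):
        return False
    if not themes:
        # Nothing explicit to check against: this silently collapses into narrow_eval.
        return True
    return bool(p.themes & themes)


pptx = Proposal("PowerPoint exports", customer_requests=12, fits_in_quarter=True,
                themes={"reporting"})
print(narrow_eval(pptx))                          # True: locally correct
print(intent_aware_eval(pptx, set()))             # True: intent never written down
print(intent_aware_eval(pptx, STRATEGIC_THEMES))  # False: misalignment now visible
```

The interesting line is the empty-themes branch. The eval harness is not wrong; it simply has no organizational intent to evaluate against, so local correctness passes for alignment.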

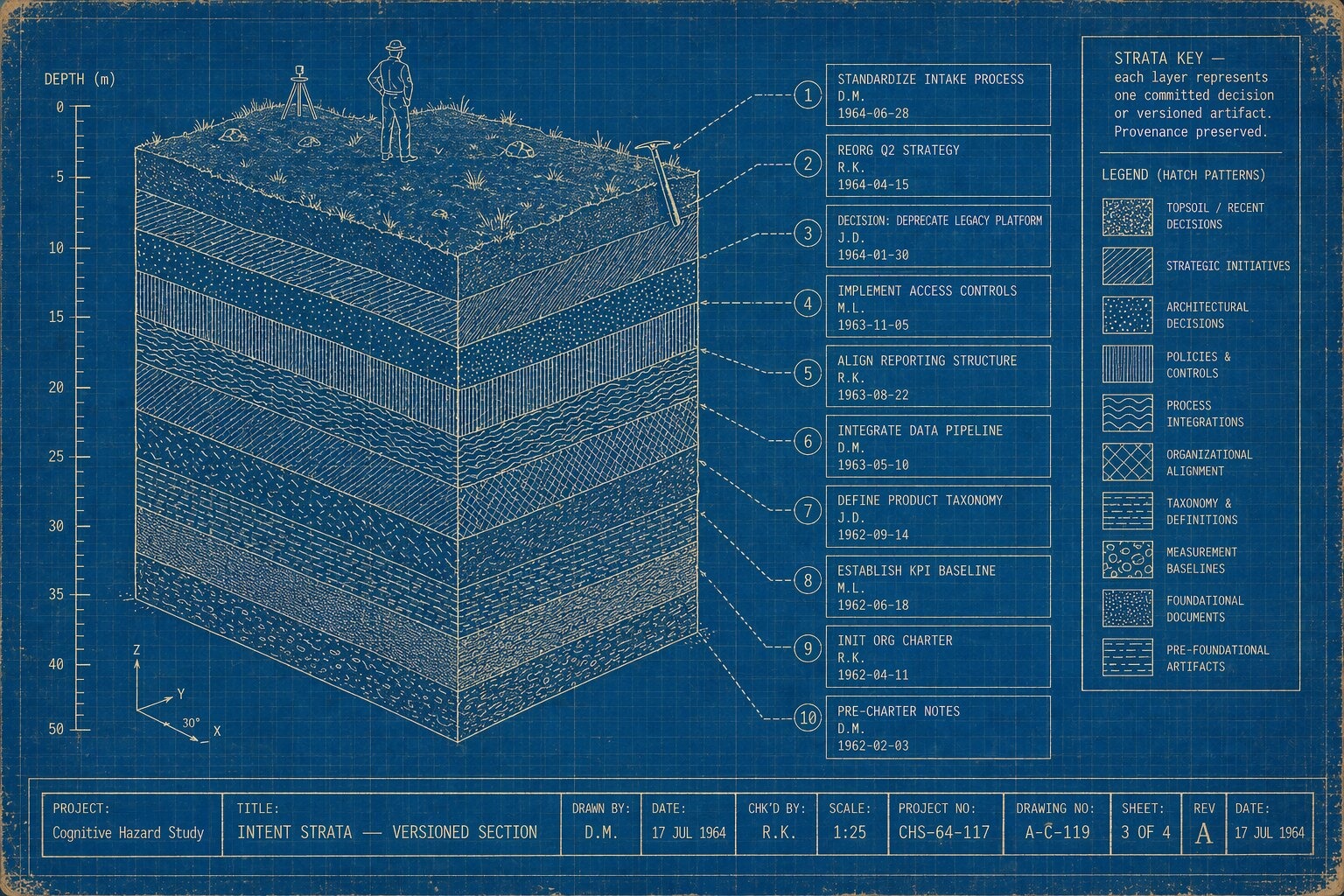

Building a control plane for intent

What does the fix look like?

For most organizations, the first version is simple. Build a unified, versioned home for organizational intent. Strategy docs. Architecture diagrams. ADRs. Business decision logs. Operating principles. System maps. Changelogs. The historical reasons things are the way they are — not just the current snapshot for Q2.

In practice, that probably means markdown in git.

That may sound underwhelming compared to the dream of some magical knowledge layer, and with RAG all the rage, I get why it might. But markdown + git gets you a lot for free: diffs, provenance, auditability, change history, and a format that is natively legible to both humans and LLMs. More importantly, it forces a commit discipline the data swamp never had. Every change has an author, a message, and a moment in time.
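A versioned home for intent does not need special tooling to start paying off. As a small sketch, assume a hypothetical convention where each ADR or decision-log entry is a markdown file with a `# Title` heading, a `Status:` line, and an optional `Supersedes:` line naming the record it replaced. The standard-library Python below follows those links to reconstruct how a current decision came to be. The file names, field names, and example decisions are all invented for illustration.

```python
from __future__ import annotations

import re
import tempfile
from pathlib import Path

# Hypothetical convention: each decision record is a markdown file with a
# "# Title" heading, a "Status: ..." line, and optionally "Supersedes: <file>.md".
FIELD = re.compile(r"^(Status|Supersedes):\s*(.+?)\s*$", re.MULTILINE)


def read_record(path: Path) -> dict[str, str]:
    """Parse the title and the Status/Supersedes fields from one record."""
    text = path.read_text(encoding="utf-8")
    fields = dict(FIELD.findall(text))
    title = next(
        (line[2:].strip() for line in text.splitlines() if line.startswith("# ")),
        path.stem,  # fall back to the filename if there is no top-level heading
    )
    return {"file": path.name, "title": title, **fields}


def decision_lineage(directory: Path, filename: str) -> list[str]:
    """Follow Supersedes links from one record back to its oldest ancestor."""
    records = {p.name: read_record(p) for p in sorted(directory.glob("*.md"))}
    chain: list[str] = []
    seen: set[str] = set()
    current: str | None = filename
    while current in records and current not in seen:  # `seen` guards against cycles
        seen.add(current)
        rec = records[current]
        chain.append(f"{rec['file']}: {rec['title']} ({rec.get('Status', 'unknown')})")
        current = rec.get("Supersedes")
    return chain


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        d = Path(tmp)
        (d / "0001-export-formats.md").write_text(
            "# Add more export formats\n\nStatus: Superseded\n", encoding="utf-8"
        )
        (d / "0002-analytics-direction.md").write_text(
            "# Prioritize intelligent analytics over export formats\n\n"
            "Status: Accepted\nSupersedes: 0001-export-formats.md\n",
            encoding="utf-8",
        )
        for line in decision_lineage(d, "0002-analytics-direction.md"):
            print(line)
```

Git already answers "who changed this file, and when?" through `git log --follow -- <path>`. A plain-text convention like this adds a cross-record answer to "what did this decision replace?", and because it is just markdown, a person or an agent can read the same chain.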

If your core context still lives in Google Docs or Confluence, you are obscuring the line of sight to intent for both humans and agents.

For larger organizations, a single repository is not realistic. The next step might be an information gateway that federates across systems without forcing people to spelunk through a swamp of tools every time they need context. But even then, I suspect most orgs should start with git and markdown before dreaming about something fancier.

If I were advising a leadership team, I’d start with four questions:

1. Where does intent live today?

Not the polished version. The real one.

2. Where does context have to be manually reconstructed?

Those are your tarpits.

3. How hard is it to onboard a new person into meaningful judgment?

Not just tool access. Judgment.

4. Are goals being paired with reasoning and constraints, or just handed down as output targets?

If people inherit targets without the reasoning behind them, local optimization is almost guaranteed.

Treat this work like infrastructure, not documentation theater. The point is not to create more documents. The point is to make better decisions easier.

The deeper constraint

We talk a lot right now about AI prompts, workflows, agents, evals, and autonomy.

Beneath all of that, there is a more fundamental truth: if an organization cannot articulate its own intent, no amount of agent sophistication will solve its AI alignment problem.

And if it can articulate intent—if it can surface it, structure it, version it, and make it easier to traverse—then it is doing something more important than building better AI systems.

It is building better cognitive infrastructure for the organization itself.

Dave Mosher is a Principal Consultant at Test Double, and has experience in legacy modernization, agentic coding, and explaining CORS poorly to people who didn't ask.