Part three of a three-part series on agentic coding and what it means for the profession. Part one set the stage. Part two looked at what changes in how we work. This one follows the logic to where it leads. If you think I'm wrong, start with part one.

I want to make a prediction that is going to make a lot of people uncomfortable.

The programming languages, frameworks, and abstractions we've spent decades building are going to start collapsing. Not all at once. Not overnight. But the direction is clear, and the pace is faster than most people want to admit. Within a decade, possibly sooner, the tools we use to build software will look fundamentally different from today's. And the driving force behind that transformation isn't a better language. It's the fact that the primary author of code is changing.

Let me take you through how we got here, because the destination only makes sense if you understand the journey.

We built languages for humans

In the beginning, there was the machine. If you wanted to tell a computer what to do, you talked to it in its own language: binary, and then assembly. You managed registers directly. You tracked memory addresses by hand. You kept a mental model of the hardware in your head at all times, because the computer wasn't going to meet you halfway.

It worked. It was brutally effective. And it was completely inaccessible to most humans, which created a ceiling on how much software the world could produce and how fast.

So we built abstractions. FORTRAN gave scientists a way to express mathematical formulas without thinking about machine instructions. COBOL let business programmers write something that almost read like English. C gave systems programmers a way to work close to the hardware without living in it entirely. Each of these was a deliberate trade: we gave up some efficiency, some directness, some closeness to the metal, in exchange for something a human could approach, learn, reason about, and maintain.

The trade kept going. With each generation, the abstraction layer got thicker. C++ brought object-oriented programming to systems work, letting developers model the world in terms of objects and relationships rather than memory and pointers. Java took that further and added garbage collection, "write once, run anywhere" portability, and a type system designed to keep programmers from shooting themselves in the foot. Python traded explicit typing and raw performance for readability so clean that it became the first language many people learn today.

And it didn't stop at languages. We started building frameworks on top of languages. Spring on top of Java. Rails on top of Ruby. Django on top of Python. React on top of JavaScript. Then frameworks on top of frameworks. Abstractions on top of abstractions. Each layer hiding more of the machinery beneath, each layer letting developers work with higher-level concepts rather than lower-level mechanics.

We called all of this progress. And by one measure, it genuinely was. The number of people who could build software exploded. The speed at which applications could be developed accelerated dramatically. Ideas that would have taken a team of specialists years to implement could be built by a small team in months.

But every single one of these abstractions was built to solve the same underlying problem: humans have limited cognitive bandwidth, and raw machines don't care.

The frameworks didn't exist because they made the software better. They existed because they made the software more approachable. More writable. More readable. More maintainable by the humans who had to work with it. Every layer of abstraction was a subsidy paid to human cognition, funded by hardware performance and infrastructure complexity.

The bill we've been running up

There's a cost to all of this that we don't talk about enough, because for most of the last fifty years it felt like the right trade.

All those abstractions require hardware to compensate. Interpreted languages generally run slower than compiled ones. Garbage collection introduces latency. Layers of framework indirection add overhead. Virtual machines and containers and cloud infrastructure exist in large part to give us enough headroom to run software that is, by any objective measure, far less efficient than it could be if humans weren't the ones writing and reading it.

We bought human approachability with CPU cycles, memory, and kilowatt-hours. At scale, that bill is enormous. We've built data centers full of hardware whose primary job is to make up for the inefficiency introduced by decades of abstractions designed around human cognitive limits.

Again: that was a reasonable trade. The alternative, keeping everything close to the metal, would have meant far less software, far fewer developers, far slower progress. The abstraction tax was worth paying.

The question now is whether it still is.

The assumption that's breaking

Every layer of abstraction in our stack rests on the same assumption that the last post in this series examined from a different angle: that a human is on the other end.

Python is readable because humans need to read it. Rails is opinionated because humans benefit from convention. TypeScript added types to JavaScript because humans make fewer mistakes with guardrails. React's component model exists because humans think in composable, reusable pieces.

Take the human out of the picture and most of these justifications evaporate.

Agentic coding systems don't need readable syntax. They don't benefit from opinionated conventions designed to constrain human error. They don't struggle to hold a complex dependency graph in their head. They don't need the language to express intent at a human-comfortable level of abstraction, because their level of comfortable abstraction is simply different from ours.

They can read assembly. They can reason about memory layouts. They can track register states. They can optimize at a level of detail that would exhaust a human developer in minutes.

And as they become the primary authors and maintainers of code, the rationale for building languages and frameworks around human approachability starts to weaken.

What comes next

I expect the next generation of programming tooling to start optimizing in a different direction.

Not away from human involvement entirely. Humans will still direct, review, understand at a system level, and take responsibility for what the software does. But the layer where code actually gets written and maintained will increasingly belong to agents. And the tools built for that layer will be built for agents, not for us.

That means languages optimized for processing efficiency rather than human readability. Frameworks that minimize abstraction overhead rather than hiding complexity from developers. Compilation targets that don't need to accommodate the cognitive limits of the person writing the code, because the entity writing the code doesn't have those limits.

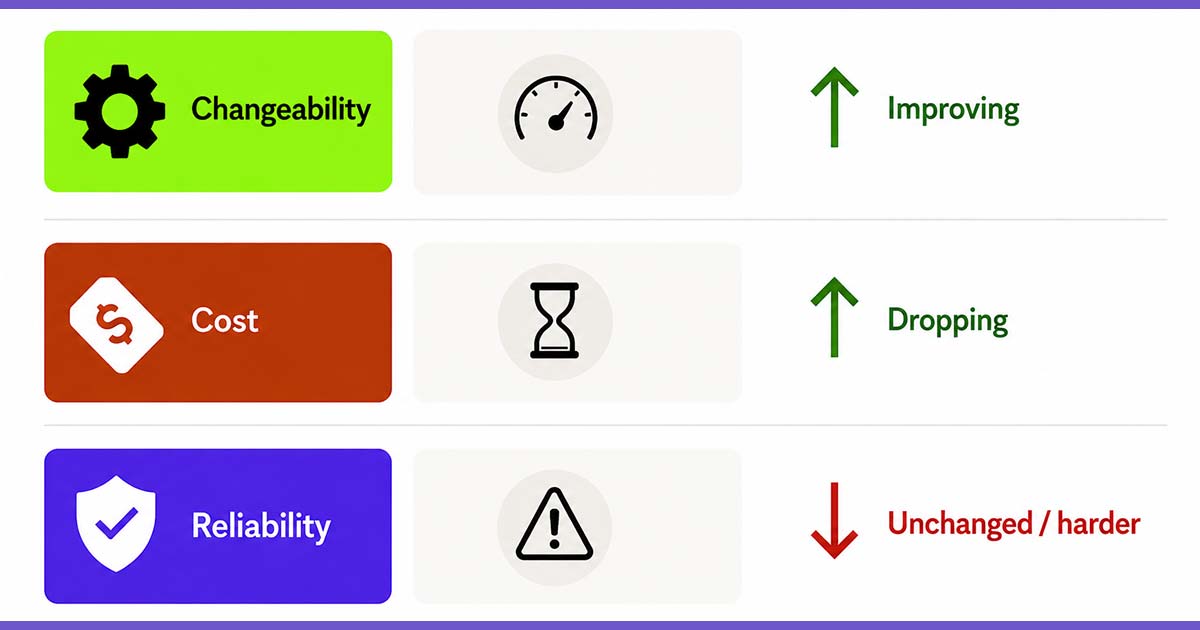

In practical terms: I expect we'll see a gradual movement back toward the metal. Not a sudden abandonment of Python and JavaScript and the frameworks we've built on them. But over the next decade, as agentic coding matures, the competitive advantage will shift. The teams and organizations that allow agents to work at lower levels of abstraction, closer to the hardware, will produce software that is faster, cheaper to run, and orders of magnitude more efficient than the software of teams still running their agent-generated code through layers of human-friendly abstraction.

We'll also see new languages emerge that are designed from the start with non-human authors in mind. What that looks like exactly, I genuinely don't know. But I'd expect them to prioritize formal verifiability, explicit behavioral contracts, and machine-readable intent over anything resembling natural language syntax. The goal won't be for a human to sit down and read a file. The goal will be for an agent to produce provably correct, maximally efficient output that a human can understand at the right level of abstraction without needing to read every line.

The disruption nobody is talking about

Here's what bothers me about where the industry conversation is right now.

We're spending enormous energy debating which current language or framework is best positioned for the agentic era. Python versus Rust. JavaScript versus TypeScript. FastAPI versus Django. As if the question is which of our existing human-approachability tools will win.

That's the wrong frame entirely.

The disruption isn't about which current abstraction layer survives. It's about whether the entire rationale for building thick abstraction layers holds. And I think the honest answer is that it holds for less and less of the stack with each passing year.

This is going to be uncomfortable for a lot of people. Entire careers have been built on expertise in specific languages and frameworks. Vast ecosystems of tooling, training, hiring pipelines, and organizational knowledge are wrapped up in the current stack. Nobody wants to hear that the thing they've spent a decade mastering is being optimized away.

But the history of software is the history of exactly this kind of disruption. Assembly programmers didn't disappear overnight when C arrived. COBOL programmers were still employed for decades after Java showed up. The transitions are slow until suddenly they're not. And the people who see the direction early and start repositioning are the ones who shape what comes next rather than being displaced by it.

What you should actually do with this

I'm not suggesting you abandon your current stack. I'm not suggesting you stop learning modern frameworks or that everything you know is about to become worthless.

I'm suggesting you pay attention to the direction. Watch how agents perform when given more latitude to work at lower levels of abstraction. Pay attention to where the efficiency gains are showing up. Notice when a framework convention that exists to protect human developers from themselves starts to feel like unnecessary overhead in an agentic workflow.

And start thinking about where your value actually lives. If your expertise is in the syntax and conventions of a particular framework, that's a more exposed position than it was two years ago. If your expertise is in understanding systems, defining problems clearly, knowing what good outcomes look like, and holding the human judgment layer that agents can't provide, that's a more durable position than it's ever been.

We spent fifty years building upward, away from the machine, toward the human. The next decade is going to start pulling in the other direction.

That's not a loss. It's a renegotiation. And the people who thrive will be the ones who recognize what was always the point, and what was always just scaffolding.

Doc Norton is Vice President of Delivery at Test Double and has extensive experience in building great software and great teams, with a career spanning software development, software architecture, product management, technology leadership, agile coaching, organizational agility, and systems thinking.