Part two of a three-part series on agentic coding and what it means for the profession. Part one made the case that we're in the middle of a sea change. This one asks what that means for how we work. The third post examines how we need to renegotiate our software abstraction stack.

For decades, we've debated what makes code "good." Variable names. Method length. Cyclomatic complexity. Nesting depth. Coupling. Cohesion. SOLID principles. The list goes on.

And most of it is right. But most of it is also built on an assumption we've never had to question before: that humans are the ones reading, reasoning about, and maintaining the code.

That assumption is crumbling fast.

Craft has always been about humans

Martin Fowler put it plainly: "Any fool can write code a computer can understand. Good programmers write code that humans can understand."

That framing has been a north star for our community for a long time. And for good reason. The principles we've developed around our craft exist for two reasons. First, fit for purpose: does the code do what it's supposed to do? Second, human maintainability: can a person read this six months from now and understand it? Can someone new to the codebase find their way around? Can I debug this at 2 a.m. without wanting to flip a table?

Human maintainability isn't exactly a hidden variable. It's actually the one we talk about most directly with developers, because it's the most immediately relatable. "Write this so future-you can understand it." That lands. That resonates. It's arguably the most accessible on-ramp to good practice precisely because we can reason about it from personal experience.

But it's also so omnipresent in the argument that we've stopped noticing it as an assumption. We've treated it as a given. We call things "good" or "bad" as if those judgments are purely about the code itself. They're not. They're about the code in the context of a human reader.

Take naming. Why do we care so much about variable names? Not because the compiler does. Because humans need to reason about intent. A well-named method is a gift to the next person who has to touch it. A poorly named one is a puzzle they'll have to solve before they can get any real work done.

Or method length. Why do short methods matter? Not because a long one won't execute. Because a human can hold a short method in their head. A 400-line function isn't "bad" in some abstract cosmic sense. It's hard for a person to reason about.

Same with complexity. Cyclomatic complexity, nesting depth, cognitive load: these are all human concerns. We built tools to measure them because we discovered that humans make more mistakes, take longer, and feel worse when working in code that piles on complexity.

Fit for purpose is the prerequisite. Human maintainability is what we've been optimizing for on top of it, and we've been doing that for a very long time.

My first paying gig was teaching this

I want to be clear that I'm not some recent convert to caring about craft. This has been the cornerstone of my career for 35-plus years, since before I had precise language for it.

My first paying gig as a consultant was teaching other developers how to write code "safely" in FoxPro. If you're not familiar: FoxPro was an interpreted language with wonky scoping, loose types, and enough sharp pointy features to let you run very fast with very large scissors. It was entirely possible to write code that worked, shipped, and then became completely unmaintainable the moment the original author left the room. I spent years helping people understand the difference between code that runs and code that holds up.

I've been learning and honing craft ever since, with one consistent conviction: code needs to be first fit for purpose and second fit for humans. That second part has never felt optional to me. It's been as important as the first.

Which is why this next part is genuinely difficult for me to write.

Agentic coding changes the equation

Agentic coding systems don't struggle with long methods. They don't lose the thread at level four of a nested conditional. They don't get confused by a poorly named variable if they have enough surrounding context. They can hold far more of a codebase in scope than any person can while making a change.

I'm not saying they're perfect. I'm saying the specific failure modes we've been designing around for fifty years are not their failure modes.

So what happens to "good" when the primary author and maintainer of the code isn't a human?

The fit-for-purpose piece still matters, maybe more than ever. Does the code do the right thing? Is it correct? Is it observable enough that we can tell when something goes wrong? Those aren't going away. But a significant portion of what we call craft, the parts optimized for human readability and human reasoning, is on shaky ground.

We're at the very early stages of this shift, and I'm not sure enough people in the industry are reckoning with it yet. More urgently: this isn't a shift measured in years. We need to be thinking in months. It is moving that fast.

The behaviors that survive, and the ones that need rebalancing

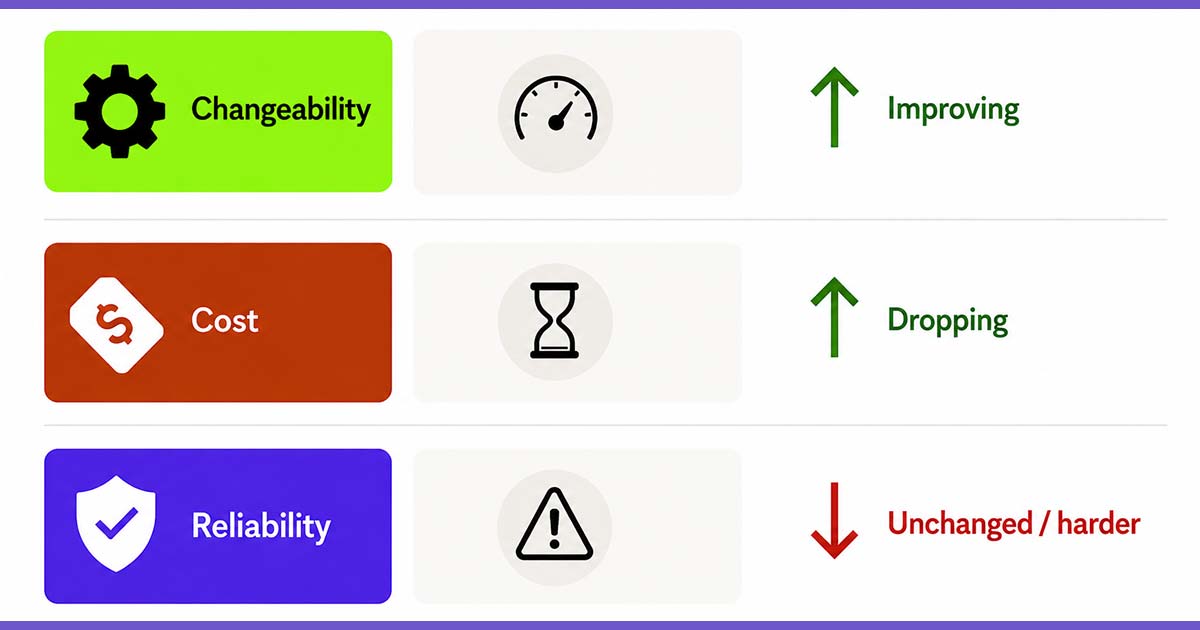

I've spent a lot of time developing a framework around the behaviors that characterize effective teams. Two of them are running headlong into this shift right now: composition and creating simple things in small steps.

Composition, being meticulous about how software components are assembled, has always served a dual purpose. Good composition makes systems malleable and observable. It also makes them navigable for humans. The first purpose survives agentic coding just fine. High cohesion, low coupling, systems that are relatively independent and reliable: these matter regardless of who or what is writing the code. The second purpose is getting complicated. The specific conventions we've used to signal compositional quality to human readers are going to need reexamination. The goal stays. Some of the rules we built to serve that goal may not.

Small steps is where the shift gets really interesting, and where I'm doing the most work on my own thinking.

For years, I've advocated for breaking down code problems into smaller pieces. Short methods. Small classes. Narrow, focused functions. The reasoning was sound: small steps leave room for learning, preserve optionality, and make it easier to change direction when you discover something new. That reasoning hasn't become wrong. But I need to be honest that a significant portion of that argument was also rooted in human cognition. Small pieces are easier for people to reason about, test, and modify.

Developer speed as a bottleneck is disappearing. Agentic systems can quickly produce robust solutions that would have taken a team of humans weeks. The friction that made "build it small so you can change it easily" such critical advice is evaporating.

But the principle behind small steps was never really about code size. It's about maintaining optionality and creating feedback loops. That part isn't going anywhere. It's just shifting in emphasis.

Small steps: More problem space, less implementation technique

Small steps was always about both the problem space and the implementation technique. But the balance is shifting.

The question used to lean heavily toward: can we break this code problem into smaller pieces so we can learn as we go? The question now leans toward: can we break this problem space into smaller deliveries so we can validate what the market actually needs as we go?

Understanding has always been the primary constraint. Do we know what problem we're solving? Do we know if our solution is actually solving it? Are we building the right thing? Developer speed compounded that constraint by slowing down the feedback loops we depend on to learn. Slower development meant slower delivery, which meant slower validation, which meant slower understanding. The two constraints were entangled.

Now the developer speed piece is evaporating. Which means understanding is exposed, nakedly, as the thing that actually limits us. And agentic coding makes it faster and cheaper than ever to build the wrong thing at scale. That changes the risk profile considerably. The answer isn't to slow down. The answer is to stay disciplined about incrementalism at the product level: releasing smaller, validating more often, treating assumptions about what users need as hypotheses to test rather than requirements to build.

I still want teams creating simple things in small steps. The "simple things" part is unchanged. Unnecessary complexity is still unnecessary complexity, regardless of how fast you can generate it. But the emphasis of "small steps" is shifting from breaking down code problems into smaller chunks toward breaking down the problem space into smaller deliveries that each teach us something.

What should we actually optimize for now?

I don't have a complete answer. I'm not sure anyone does yet. But here's how I'm thinking about it.

The questions themselves have shifted. "Can a human read this easily?" isn't the wrong question, but it's now clearly insufficient on its own, and its importance is waning. The more pressing question is: when something goes wrong, and something will always go wrong, can we tell what happened fast enough to fix it? Can we observe the system's behavior without having to read every line of code it contains? Can we understand what it's doing at a level that lets us take responsibility for it?

That last part matters more than ever. We're still the ones who care when the system fails. We're still the ones who need to understand what happened and decide what to do next. And there are decisions being made in software that have real consequences for real people. Humans need to be able to understand those decisions well enough to own them. That's not a coding standards question. It's an ethics question. But it touches code directly.

Observability and accountability at the system level: those are becoming more important, not less. Here's why. When humans write and maintain code, they're in it. They develop intuition about the system through direct contact with its internals. When agents write and maintain code, humans move up a level. We're above the code, not in it. We lose that intuitive feel, and we need the system to compensate by telling us what it's doing clearly and loudly, through logs, metrics, alerts, and behavior we can trace without reading every line. That's not a weakness of agentic coding. It's just a different relationship with the codebase, and it requires us to be more deliberate about observability than we've ever had to be before.

The risk isn't caring less about quality

This isn't an argument for lowering standards. It's an argument for examining which standards are actually serving the outcomes we care about and which ones are serving a context that's changing.

The instincts developed over years of caring about code quality aren't suddenly wrong. But they need to be held a bit more loosely. The specific rules we've derived from those instincts were derived in a particular context. That context is changing faster than most of us expected.

The risk isn't that we stop caring about quality. The risk is that we confuse the rules for the reasons behind the rules. Rules change. Reasons evolve. Holding the reasons clearly is what lets us figure out what still applies and what needs to shift.

I've spent 35 years believing that fit for purpose and fit for humans were both non-negotiable. I still believe that. I'm just starting to reckon with the possibility that "fit for humans" is going to mean something meaningfully different in the next few years than it has for the last few decades.

Agentic coding isn't the end of craft. It's the beginning of a significant renegotiation of what craft means. And some of what gets renegotiated is going to be uncomfortable, especially for those of us who've built our professional identity around certain convictions about how good software is made.

The sooner we lean into that conversation, the better positioned we'll be to shape where it lands.

So what does "good" mean now? We're going to find out together. And we'll get to a better answer faster if we stop pretending the question isn't changing.

Doc Norton is Vice President of Delivery at Test Double and has extensive experience building great software and great teams. Over his career, he has focused on software development, software architecture, product management, technology leadership, agile coaching, organizational agility, and systems thinking.