Editor's Note: Double Agents Anya Iverova (Recruitment), Cathy Colliver (Marketing), and Christine McCallum-Randalls (People Success) attended All Things AI, March 23-24, 2026 in Durham, North Carolina, and shared this field report summary of sessions they attended.

Workshop Day

AI for business professionals

As All Things AI founder Mark Hinkle of Peripety Labs led the AI for Business Professionals workshop, he emphasized the importance of building on the foundations of what makes us human: judgement, empathy, and trust. Much of the workshop covered the practical application of moving from tools thinking to systems thinking with AI. Mark provided demos and food for thought, and it was a great learning experience for all three of us.

Fireside Chat: The Past, Present, and Future of AI

Igor Jablokov, Pryon & Mark Hinkle, Peripety Labs

Igor Jablokov is no stranger to AI. He’s the brain behind the Yap multimodal models that became Amazon’s first AI acquisition and were foundational to what we know today as Alexa, Echo, and Fire TV.

Igor spoke about the seeming dichotomy between humanity’s persistent strength and the multiple disturbing possibilities that arise should bad actors take control of technology for harmful purposes.

“AI gives you what you want. Humans give you what you need.”

Multiple things can be true at once:

- Uncomfortable truths about how some companies emerged as forerunners by bending or flouting norms

- Wildly useful multimodal models Igor worked on before most people had AI on their radar

- Deeply impactful things that make us human, and why it’s important that humans are holding the wheel and driving, not the other way around

Opening Keynotes

When AI meets quantum: Expanding the boundaries of machine intelligence & what agents can really do

whurley, Strangeworks

whurley opened with a reality check: most people who say they understand quantum computing are overstating it. The practical barriers are real, but access and abstraction layers are key to enabling software developers to make the leap to quantum development. Meanwhile, progress is compressing fast: the number of qubits needed to break encryption is shrinking, and the potential applications in healthcare and materials science are significant.

whurley framed AI and quantum computing as organically compatible: AI imitates nature, while quantum computing uses nature as its substrate. AI agents will accelerate quantum progress and optimization in the near term, with quantum-native agents as a future possibility. whurley explores these ideas further in his talk The Power of a Billion Minds.

Who does the algorithm save: Preserving humanity with AI in healthcare

Yassah Reed, Intercom

Yassah Reed compared two AI-enabled algorithms in healthcare that illustrate vastly different outcomes. NarxScore uses machine learning to predict potential pain medication misuse. But it also exhibits bias against women, people with chronic disease, and patients at teaching hospitals, generating false positives that pressure doctors into withholding care for fear of medical board scrutiny. TREWS, developed at Johns Hopkins, monitors patient records to detect early sepsis warning signs and has meaningfully reduced one of the leading causes of in-hospital death.

The contrast between the two surfaces critical considerations for any AI implementation:

- Basis: predicting future behavior vs. analyzing physiological signals

- Data: law enforcement-oriented data vs. historical patient medical records

- Training: minimal personalization vs. personalized deployment

- Transparency: proprietary algorithm vs. open methodology

- Human Role: gatekeeper (pressured to comply) vs. champion (empowered to act)

Darko Mesaros, AWS

Darko demonstrated work on AWS Strands Agents, focusing on how agents can share persistent memory across interactions. The pattern involves one agent storing user preferences and context via a memory and session ID, while a second agent retrieves that memory to provide personalized recommendations—all frictionless from the user's perspective. The example was straightforward: tell one agent your preferences, then have a separate agent use that stored context to make tailored suggestions.

Darko also highlighted AgentCore Gateway, which lets you treat any API as an MCP server, and AgentCore Browser, which enables agents to simulate browser interactions. This expands the surface area of what agents can access and act on.
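Here is a minimal plain-Python sketch of the shared-memory pattern. It is not the Strands Agents or AgentCore API. MemoryStore, PreferenceAgent, and RecommendationAgent are hypothetical names. An in-process dictionary keyed by user ID and session ID stands in for a managed memory service.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical shared memory, keyed by (user_id, session_id)."""
    _data: dict = field(default_factory=dict)

    def remember(self, user_id: str, session_id: str, key: str, value: str) -> None:
        self._data.setdefault((user_id, session_id), {})[key] = value

    def recall(self, user_id: str, session_id: str) -> dict:
        # Return a copy so a reading agent cannot mutate stored context by accident
        return dict(self._data.get((user_id, session_id), {}))


class PreferenceAgent:
    """First agent: stores what the user tells it."""

    def __init__(self, memory: MemoryStore) -> None:
        self.memory = memory

    def handle(self, user_id: str, session_id: str, key: str, value: str) -> str:
        self.memory.remember(user_id, session_id, key, value)
        return f"Noted: {key} = {value}"


class RecommendationAgent:
    """Second agent: reads the same memory to tailor its suggestion."""

    CATALOG = {"vegetarian": "mushroom risotto", "spicy": "chili tofu stir-fry"}

    def __init__(self, memory: MemoryStore) -> None:
        self.memory = memory

    def recommend(self, user_id: str, session_id: str) -> str:
        prefs = self.memory.recall(user_id, session_id)
        # Fall back to a generic pick when nothing is stored for this user/session
        return self.CATALOG.get(prefs.get("diet", ""), "chef's special")


memory = MemoryStore()
PreferenceAgent(memory).handle("user-42", "session-1", "diet", "vegetarian")
print(RecommendationAgent(memory).recommend("user-42", "session-1"))  # prints "mushroom risotto"
```

The user never repeats themselves. The second agent reads what the first one stored under the same IDs.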

How the oldest principles in engineering will reshape AI

Luis Lastras, IBM

Luis Lastras argued that production AI failures are fundamentally a decomposition problem, not a model problem. When an AI system is effectively one massive prompt (huge context plus instructions), it becomes nearly impossible to troubleshoot. The oldest engineering principle applies: take a complex system, define its parts, and refine them. This thinking reshapes how we approach not just models, but also prompts and how they function together.

Luis introduced Mellea, IBM's approach to enforcing declared constraints at runtime. Rather than relying on prompt instructions like "do not hallucinate," Mellea provides structure through code and owns the iteration loop on drafts. A key finding: modularity makes small models perform like big ones, with lab tests across Granite and Llama showing the approach scales across model sizes. For enterprises, this points toward a safer, more secure path to leveraging AI with their own data, and Lastras framed modularity not as a tradeoff, but as the design itself.
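The recap doesn't include any Mellea code. The sketch below shows only the general idea in plain Python: declared constraints are checked in code, and the program, not the prompt, owns the draft-and-retry loop. generate_with_constraints and fake_model are hypothetical. The stubbed model stands in for a real Granite or Llama call.

```python
from typing import Callable

# A constraint pairs a human-readable description with a code check
Constraint = tuple[str, Callable[[str], bool]]


def generate_with_constraints(
    generate: Callable[[str], str],
    instruction: str,
    constraints: list[Constraint],
    max_attempts: int = 3,
) -> str:
    """Generate a draft, check every declared constraint in code, and retry with feedback."""
    prompt = instruction
    for _ in range(max_attempts):
        draft = generate(prompt)
        failed = [desc for desc, check in constraints if not check(draft)]
        if not failed:
            return draft
        # Feed the specific violations back instead of relying on a prompt rule
        prompt = f"{instruction}\nYour previous draft violated: {'; '.join(failed)}. Revise it."
    raise ValueError(f"No draft satisfied all constraints after {max_attempts} attempts")


drafts = iter(["A very long summary that rambles on and on", "Short summary."])


def fake_model(prompt: str) -> str:
    # Stand-in for a model call: returns canned drafts in order
    return next(drafts)


constraints = [
    ("at most 5 words", lambda text: len(text.split()) <= 5),
    ("ends with a period", lambda text: text.endswith(".")),
]
result = generate_with_constraints(fake_model, "Summarize the report.", constraints)
print(result)  # prints "Short summary."
```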

AI Builders

Beyond the AI hype: Building durable agentic AI applications

Achin Gupta, Netskope & Divya Mahajan, Amazon

Achin Gupta and Divya Mahajan identified the "four horsemen" of AI project failure: trying to put AI everywhere, accepting that it works only sometimes, framework lock-in, and demo-driven development. These are predictable human traps, and the antidote is a single meta-principle: build for the problem, not for the stack.

Achin and Divya shared an Agentic Applications Delivery Framework with four phases:

- Define starts by scoping functional capabilities and constraints, then applies a necessity filter (does this actually need an LLM, or would an API call or database lookup suffice?) and assesses blast radius for risk.

- Design establishes primitives and minimum viable architecture.

- Evaluate tests and hardens with built-in swappability.

- Define core primitives and shared vocabulary across the team.

A key concept is the "inflection point staircase," where the goal is finding the lowest level that actually passes evaluation. If evaluation fails, you step back down the staircase rather than layering on more complexity.
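One way to read the staircase idea is as a loop. Order candidate approaches from simplest to most complex, run the same evaluation set against each, and keep the first one that clears the bar. The sketch below assumes a hypothetical accuracy threshold of 0.9 and uses stubbed rungs. In practice the higher rungs might be retrieval, tools, or agents.

```python
from typing import Callable, Optional

# A rung is (name, solver); rungs are listed from least to most complex
Rung = tuple[str, Callable[[str], str]]


def lowest_passing_rung(
    rungs: list[Rung],
    eval_cases: list[tuple[str, str]],
    threshold: float = 0.9,
) -> Optional[str]:
    """Return the simplest rung whose accuracy on eval_cases meets the threshold."""
    if not eval_cases:
        raise ValueError("need at least one evaluation case")
    for name, solve in rungs:
        correct = sum(solve(question) == expected for question, expected in eval_cases)
        if correct / len(eval_cases) >= threshold:
            return name
    return None  # no rung passed the evaluation


ORDER_STATUS = {"A1": "shipped", "B2": "pending"}


def lookup(order_id: str) -> str:
    # Rung 1: a plain database-style lookup, no LLM involved
    return ORDER_STATUS.get(order_id, "unknown")


def call_llm(order_id: str) -> str:
    # Rung 2: hypothetical placeholder for a model call
    return "shipped"


rungs = [("lookup", lookup), ("llm", call_llm)]
cases = [("A1", "shipped"), ("B2", "pending")]
print(lowest_passing_rung(rungs, cases))  # prints "lookup"
```

Here the plain lookup already passes, so the LLM rung is never needed. That is the necessity filter from the Define phase in miniature.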

AI Users

Adopting AI-augmented knowledge work

Clayton Chancey, Praecipio

Clayton Chancey presented a practitioner's framework for AI-augmented knowledge work, centered on a key distinction: apply AI to the way you actually work, not how you wish you worked. The framework starts with surfacing your real cognitive process step by step, then breaking work into distinct phases and honestly assessing which ones you can supervise. That self-assessment determines whether you're in production mode or learning mode for each phase. And phase handoffs should be treated as checkpoints, since errors in early phases cascade downstream.

The complementary piece is disciplined prompting: be explicit and specific, use XML tags to structure context and instructions, provide examples rather than lengthy descriptions, explain the why behind instructions, break complex tasks into focused steps, and match tone to desired output. Data integration matters, too. Your cognitive process is only useful if you can actually get the right data into context, so mapping where data lives and matching friction to frequency is essential. AI wrappers alone don't solve the problem—understanding your own workflow does.
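Several of these prompting habits can be captured in a small helper that assembles a prompt with XML-style tags. The tag names and the build_prompt function are illustrative assumptions. No particular model requires this schema.

```python
from xml.sax.saxutils import escape


def build_prompt(context: str, steps: list[str], why: str, examples: list[str]) -> str:
    """Wrap each part of a prompt in its own XML-style tag."""
    numbered = "\n".join(f"{i}. {escape(step)}" for i, step in enumerate(steps, start=1))
    shots = "\n".join(f"<example>{escape(ex)}</example>" for ex in examples)
    return (
        f"<context>\n{escape(context)}\n</context>\n"
        f"<instructions>\n{numbered}\n</instructions>\n"
        f"<why>\n{escape(why)}\n</why>\n"
        f"<examples>\n{shots}\n</examples>"
    )


print(build_prompt(
    context="Q3 support tickets exported from the helpdesk",
    steps=["Group tickets by root cause", "Rank the groups by ticket volume"],
    why="The summary feeds next quarter's roadmap discussion",
    examples=["Login failures: 42 tickets"],
))
```

Escaping the inserted text keeps a stray < or & in user data from being read as a tag boundary.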

AI in the Enterprise

Enterprise AI, right-sized: Why small language models (SLMs) deserve serious attention

Don Shin, CrossComm

Don Shin made the case that small language models (SLMs) such as Gemma, Qwen, and Phi-4 deserve serious enterprise attention. The right-sizing framework matches model size to task complexity: SLMs hit a sweet spot for classification, extraction, and summarization, while LLMs handle more open-ended work. The advantages compound for enterprises: self-hosting drives down cost and latency, data never leaves your infrastructure (which simplifies HIPAA, SOC 2, GDPR, and FedRAMP alignment), and you own the full audit trail. Deployment flexibility spans edge, on-prem, and cloud, with container-native infrastructure and no vendor lock-in.

Practical use cases included document intelligence across millions of documents (faster, cheaper, and more secure than LLMs), agentic coding where SLMs handle scoped tasks like bug fixes and test generation at sub-100ms with zero data exposure, and customer-facing AI delivering brand-safe responses under 200ms. The bigger architectural pattern is a hybrid router: an LLM orchestrator delegates routine, predictable requests to fine-tuned SLM sub-agents. Shin's recommended evaluation path is straightforward: audit your AI workloads, identify SLM candidates, benchmark against your data, calculate the business case, and start small. Use APIs to experiment, then self-host for production at scale.
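A hybrid router can be sketched in a few lines. To keep it short, the routing decision here is a simple rule on the task type rather than an LLM orchestrator. The SLM and LLM calls are hypothetical stand-in functions.

```python
from typing import Callable

Model = Callable[[str], str]

# Assumption: these task types are routine and predictable enough for a fine-tuned SLM
ROUTINE_TASKS = {"classify", "extract", "summarize"}


def route(task_type: str, payload: str, slm_agents: dict[str, Model], llm: Model) -> tuple[str, str]:
    """Delegate routine tasks to a matching SLM sub-agent; send everything else to the LLM."""
    if task_type in ROUTINE_TASKS and task_type in slm_agents:
        return "slm", slm_agents[task_type](payload)
    return "llm", llm(payload)


def invoice_classifier(text: str) -> str:
    # Stand-in for a fine-tuned SLM classifier
    return "invoice" if "amount due" in text.lower() else "other"


def general_llm(text: str) -> str:
    # Stand-in for a call to a large hosted model
    return "open-ended answer"


slm_agents = {"classify": invoice_classifier}
print(route("classify", "Amount due: $120", slm_agents, general_llm))  # prints ('slm', 'invoice')
print(route("plan", "Draft a data migration plan", slm_agents, general_llm))  # prints ('llm', 'open-ended answer')
```

Falling back to the LLM when no SLM exists for a task type is one reasonable design choice. It lets sub-agents be added one workload at a time.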

The reality of AI no one is talking about

Ankit Dheendsa, Morphos AI

Ankit Dheendsa challenged the industry to solve root problems rather than symptoms. We're entering the age of inference, but the second-order effects (enormous data centers, energy consumption, latency at enterprise scale) are real blockers to mass adoption. His core metaphor: we're building Lamborghinis and driving them on dirt roads. The infrastructure underneath AI needs as much attention as the models themselves, and CTOs should be championing investment in AI infrastructure over AI products.

The actionable thesis centers on what's actually in our control. Rather than training bigger models and buying more GPUs, Ankit argued for rethinking the approach entirely: armies of small, task-focused agents working in tandem, radical focus on data fidelity to reduce what needs to be stored and processed, and pushing memory to edge devices to regain privacy and run on cheap hardware.

AI at the Speed of Trust

Greg Boone, Walk West

Greg Boone framed AI adoption as fundamentally a trust and culture challenge, not a technology one. Greg pointed to Martec's Law—the gap between exponential technology change and logarithmic organizational change—as the core tension companies face, and introduced a "4-IN Framework" for building AI-ready culture: Intelligence (foundational literacy), Innovation (small high-value use cases), Inspiration (creative energy), and Infrastructure (governance and tooling at scale).

A central theme was that human skills aren't going away, citing McKinsey research that 72% of human skills remain essential in the AI era, anchored around curiosity, creativity, critical thinking, communication, and collaboration. To close the adoption gap, Greg's team gamified the learning curve, achieving 100% participation in week one and 152 certifications across 360 hours invested in just three months. The takeaway: making AI exploration psychologically safe and even fun is what breaks the fear barrier and gets organizations moving.

Maximizing value from Enterprise tech in the age of AI

James Kaplan, McKinsey

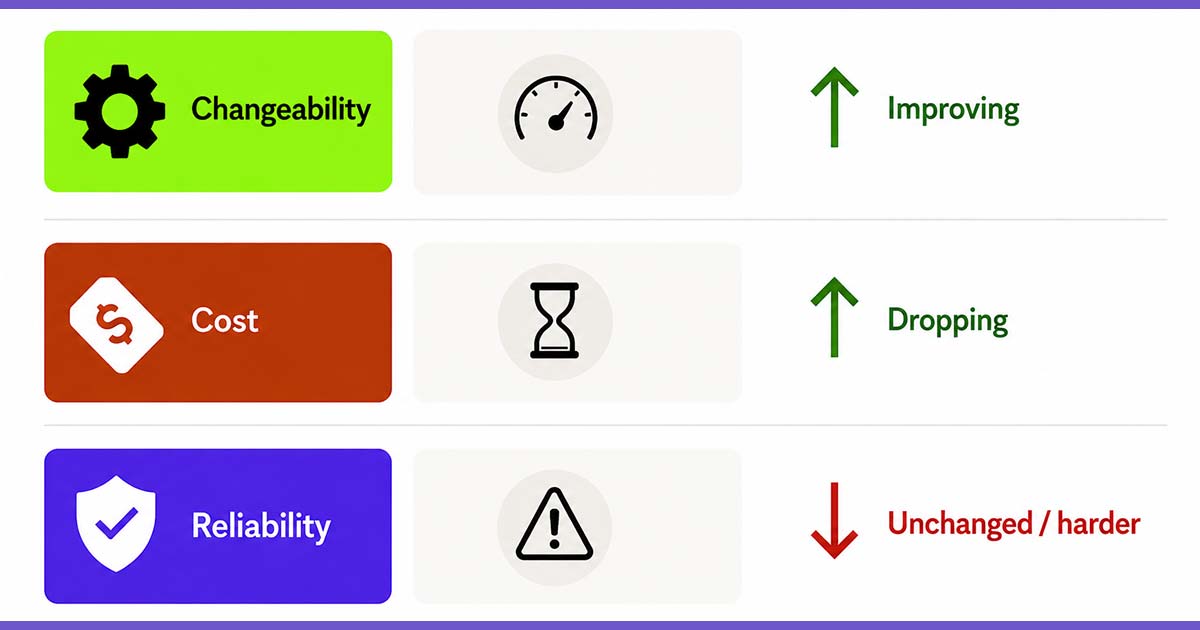

James Kaplan made the case that most enterprises are stuck in an "IT doom loop," spending money on the business rather than investing in it. The gap between technology aspirations and actual progress is widened by misaligned incentives and non-cooperation from business leaders. He outlined six imperatives for reinventing enterprise technology: recalibrating to new IT economics, rebuilding platforms with AI, renovating enterprise data, redesigning talent models for human-agent collaboration, revamping vendor relationships to avoid lock-in, and remodeling risk and resiliency.

A recurring theme was the coordination breakdown between technology and business leadership. Only about 1 in 10 CIOs report effective support from business partners across key interactions. James argued that CIOs and CTOs need to think like game theorists: quantifying the EBITDA impact of non-cooperation, presenting options that force real trade-off decisions, creating transparency through leading indicators, and signaling credibility by demonstrating success within IT before asking business units to change. Systems thinking and strategic framing, not just better technology, are what unlock enterprise value from AI investments.

Building Your AI Factory

Marc Sirkin

Marc Sirkin talked about a practical, friction-first approach to AI adoption. Rather than starting with tools, Marc argued teams should identify where work is slow, fragile, or dependent on heroics—then build around that. The recommended starting point is deceptively simple: get your company context, ICP, and key processes out of your head and into documents, even using AI as an interviewer to help surface that knowledge.

From there, Marc laid out a four-week build-test-scale cycle: map friction and collect content in week one, build one narrow component in week two, test it in a live workflow in week three, then review, refine, scale, or kill it in week four. He emphasized that common mistakes include automating broken processes, starting too broad, and skipping success metrics. Every implementation should be measured against time saved, throughput, quality, or consistency. And if it doesn't reduce the original friction, fix it or kill it.

To RAG, or not to RAG?

Owen Chen, Fidelity Investments

Owen Chen framed the RAG question through the lens of financial services, where everything must be auditable and compliant. The core need is straightforward: LLMs don't know your company's specific business and procedures, so you augment with company data via a vector database ingestion pipeline and a real-time inference pipeline. Pre-processing, chunking for token limits, and data hygiene tasks like expanding acronyms are essential, and good fits for SLMs. Owen highlighted combining lexical and semantic search for better retrieval ranking, and noted that in regulated industries, you can't just present answers directly; compliance and safety filtering layers are required.

On whether you actually need RAG now that context windows are expanding toward 1M+ tokens: yes. Simply stuffing everything into a large context window hits real limits: cost, latency, the "lost in the middle" phenomenon, and accuracy dropping from 93% at 125K tokens to around 21% near 1M. Auditability and access control aren't feasible with context-only approaches either. Chen's best practices for scaling enterprise AI emphasized safety-first design: kill switches for agents, supervisory layers with human-in-the-loop, continuous evaluation frameworks with SME review, and keeping documentation concise and focused for LLM consumption.
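The recap doesn't say how Fidelity merges lexical and semantic results. One widely used option is reciprocal rank fusion (RRF), sketched below. The two input rankings are hard-coded stand-ins for, say, a keyword (BM25) search and a vector-similarity search. k=60 is a commonly used default, not a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs by summing 1 / (k + rank) across lists."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest combined score first; documents found by both searches tend to rise
    return sorted(scores, key=scores.get, reverse=True)


lexical = ["doc-acronyms", "doc-fees", "doc-forms"]    # keyword-match order (stand-in)
semantic = ["doc-fees", "doc-policy", "doc-acronyms"]  # embedding-similarity order (stand-in)
print(reciprocal_rank_fusion([lexical, semantic]))
# prints ['doc-fees', 'doc-acronyms', 'doc-policy', 'doc-forms']
```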

AI Executives

Is the future forked?

Micaela Eller, Ernst & Young

Micaela Eller argued that organizations spend too much time on technological readiness and not enough on the enablement scaffolding that helps people actually use it. The starting point is identifying where people are getting blocked in their processes and building systems that make it easier to do the brave thing, rather than expecting individuals to push through friction on their own.

Micaela’s framework drew on servant leadership as the model for AI enablement: balance the plumbing (effective techniques and infrastructure) with the poetry (inspiration and culture). That means empowering trust through service, driving innovation through open collaboration, building a knowledge-sharing culture, and creating space for experimentation. The throughline was that durable adoption comes from designing systems and culture around people, not just deploying technology and hoping for readiness.

AI Governance, Security & Enterprise

Orchestrate agentic AI: Context, checklists, and no-miss reviews

Calvin Hendrix-Parker, Six Feet Up

Calvin Hendrix-Parker tackled the nondeterministic nature of AI tools head-on — noting that most business work is nondeterministic too. The path to reliable outputs requires quality inputs, constraints, dialectic review, and visibility. Calvin’s live demo illustrated the risks: naive analysis requests waste tokens on XML noise from doc files, the "fuzzy middle gap" silently skips content without warning, and the same prompt can produce different results depending on how token limits are hit. The uncomfortable truth: people at your company are already doing this with whatever tools you give them.

The key principle is that context should be treated as a workspace, not a warehouse — context degrades over time and bloated inputs produce faulty outputs. Calvin’s agent architecture approach keeps things contained: a context buffer connected to an LLM, local tools with skills and subagents via MCP and hooks, local resources like flat files and databases, all running in a sandbox with limited local machine access. The emphasis was on building structured, constrained environments that make agentic AI reliable rather than hoping unconstrained prompts will produce consistent results.

Check out Calvin’s GitHub repo for the demo tool.
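The "workspace, not warehouse" principle can be illustrated with a bounded context buffer. It pins standing constraints and evicts the oldest material once a token budget is exceeded. This is a hypothetical sketch, not code from Calvin's repo. It counts whitespace-separated words as a crude stand-in for a real tokenizer.

```python
from collections import deque


class ContextBuffer:
    """Bounded working context: pinned constraints plus the most recent messages."""

    def __init__(self, max_tokens: int, pinned: str = "") -> None:
        self.max_tokens = max_tokens
        self.pinned = pinned  # standing constraints that are never evicted
        self.messages: deque[str] = deque()

    @staticmethod
    def count_tokens(text: str) -> int:
        # Crude approximation: whitespace-separated words, not real model tokens
        return len(text.split())

    def add(self, message: str) -> None:
        self.messages.append(message)
        budget = self.max_tokens - self.count_tokens(self.pinned)
        # Evict oldest messages until the working set fits the remaining budget
        while self.messages and sum(map(self.count_tokens, self.messages)) > budget:
            self.messages.popleft()

    def render(self) -> str:
        return "\n".join(part for part in (self.pinned, *self.messages) if part)


buf = ContextBuffer(max_tokens=12, pinned="Rules: cite sources.")
for note in ["step one read the spec", "step two draft the plan", "step three review risks"]:
    buf.add(note)
print(buf.render())
# prints:
# Rules: cite sources.
# step two draft the plan
# step three review risks
```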

AI Deep Thoughts

The future of AI and Durham

Mayor Leonardo Williams, City of Durham

Mayor Leonardo Williams positioned Durham's economy as naturally aligned with AI, anchored in healthcare and life sciences, education and research, advanced industries, and knowledge work. Durham was recently ranked the #2 most resilient, future-ready city by SXSW. Mayor Williams framed AI as augmented intelligence rather than artificial intelligence, emphasizing that the biggest opportunity isn't new companies but existing ones adopting AI to improve.

Mayor Williams stressed that inclusive transition is essential, noting that for the first time five generations are living and working together. The cities that win in the AI economy will be the ones that collaborate best. The conference itself served as proof of Durham's momentum. Organizers expected 1,000 attendees and ended up with around 2,500, with the turnout potentially fueling investment in expanding the Durham Convention Center.

Predictable Jokes: Comedy for AI builders

Evan Wimpey

You all, we’re not even going to try to recap this, except to say it was delightful.

Owning the inference layer

Taylor Smith, Red Hat

Taylor Smith used a car analogy to frame the spectrum of AI model deployment options.

- Using an LLM subscription is like taking a taxi, convenient but with less control and no privacy

- A dedicated endpoint or managed service is like leasing, with more control, but you don't own the infrastructure

- Running models on your own infrastructure is like owning the car, with full control across cloud, on-prem, or air-gapped environments, but with significantly more responsibility around scaling, serving, hardware utilization, and latency

Taylor’s point is that running your own models has become newly viable. Model capabilities have expanded enough to make self-hosting feasible, and infrastructure advances in LLM inference servers and accelerators have made it performant. For enterprises weighing the build-versus-buy spectrum, the tradeoff between control and responsibility is real, but the option to own is more practical than it's ever been.

North Carolina: Community impact focus

Sarah Chick, NPower

Sarah Chick posed the central equity question of AI adoption: as AI transforms the future of work, who gets to participate? NPower, a tech education non-profit focused on historically underutilized talent, works to illuminate pathways into technology careers. Without intentional guidance, AI's benefits flow to those who already have proximity to the industry while leaving many others behind.

Sarah’s message was clear: if we want AI's future to be for everyone, everyone has to be involved in building it. Many communities have incubators, ecosystem builders, and organizations like NPower that expand access and support. Finding and getting involved with one, whether through volunteering or other support, is a practical way to help close the gap.

Open source meets AI

Keith Bergelt, Open Invention Network

The emergence of open source created an interesting conundrum for patent litigation.

IBM and Red Hat, among others, architected Open Invention Network as a way to collaborate, build on each other’s ideas, cross-license their patents, and fuel innovation.

There are now 4,200+ community members, from startups to large corporations, and OIN licensees own over 3 million patents and patent applications.

WTF AI

Chisa Penix-Brown, Give it to the People

WTF is how you’re feeling about AI from time to time.

Chisa Penix-Brown brought energy and practicality to a session anchored in a simple truth: most people don't have an AI problem, they have a clarity problem. Chisa demonstrated this with a hands-on exercise: volunteers received identical instructions to fold and tear a piece of paper, yet every result was different based on individual interpretation. The lesson: vague inputs produce blurry results, wasted time, and wrong tool use. Everything you put in influences what you get out.

Chisa’s framework—Write, Think, Flow—breaks down how to fix that. Write means getting clear on what you actually want by providing context, task, format, and tone. Think means building the conversation with power questions: what's missing, how can the prompt improve, what alternative approaches exist. Flow means turning AI into a repeatable system rather than a struggle. Check out Chisa’s podcast.

Community is infrastructure in the age of AI

Dena Guvetis, American Underground

Founders are choosing proximity. American Underground, based in Durham, now has over 650 members and has helped thousands of startups get going. Dena argues that community isn’t where you happen to be; it’s how you win.

Someone mentions a problem at lunch. Another person knows someone and makes an introduction. The business is able to move forward. This isn’t networking; it’s infrastructure. And it’s becoming more important, not less, in the age of AI.

Building AI Champions

Boz Vitanova, TeamLift

Boz spoke about how to identify and build up AI Champions internally to not only increase AI adoption, but also get the most out of it.

“The companies winning with AI aren’t the ones with the best tools. They’re the ones with the best people using them.”

What makes a good AI Champion:

- They can break any task into steps

- They already have informal workarounds nobody else knows about

- They instinctively think about what could go wrong

- They can describe what ‘good output’ looks like for their work

- Everyone asks them when they’re stuck

Closing Keynotes

AI’s impact on the startup ecosystem

Chris Heivly

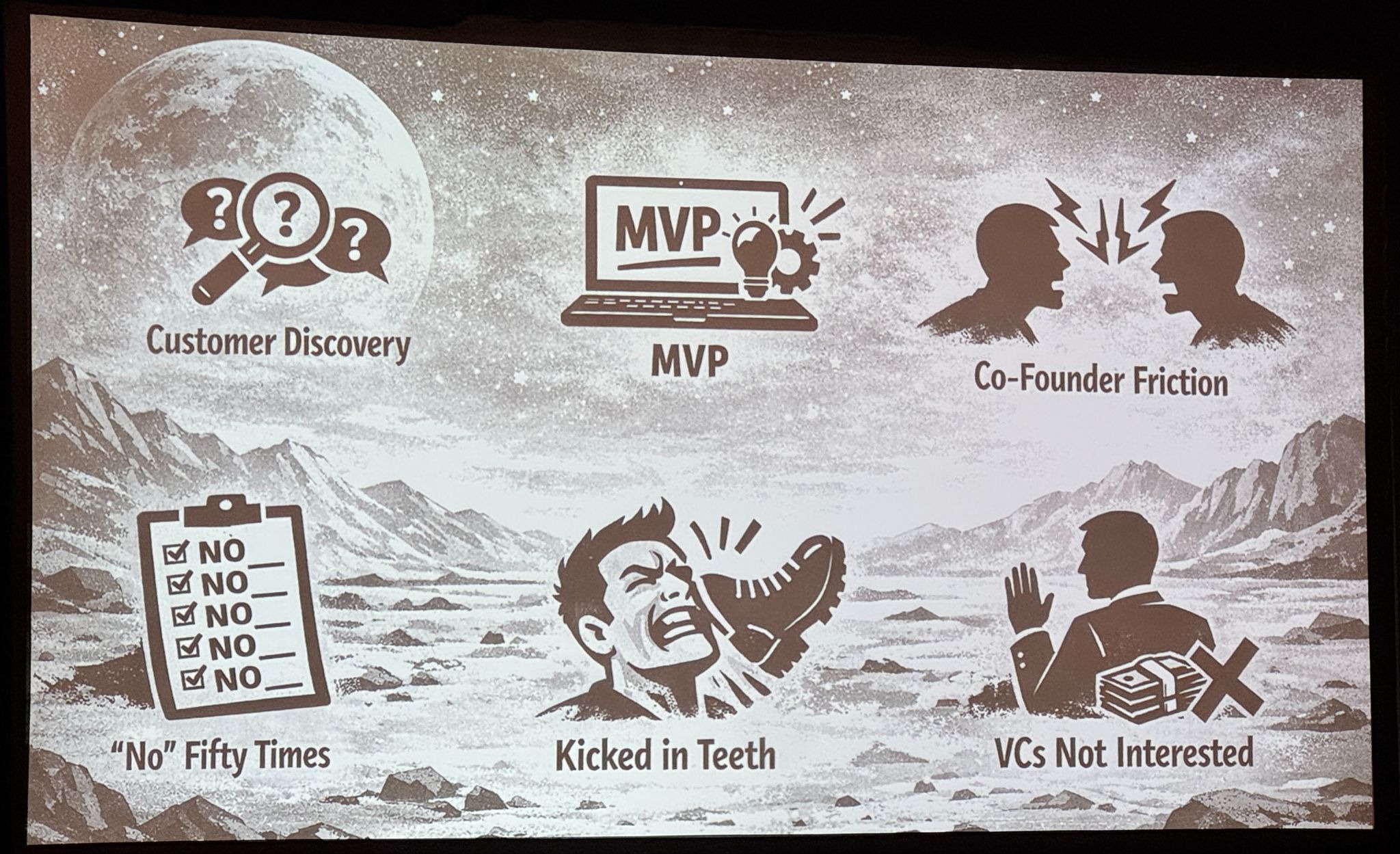

Chris Heivly reminded us that AI isn’t a cheat code for entrepreneurship:

“You can’t skip ahead to the top of Everest, you have to go through the base camps. You can’t get to year three without going through month three.”

The same startup principles still apply, and founders must be prepared for:

- Customer discovery

- MVP

- Co-founder friction

- “No” fifty times

- Getting kicked in the teeth

- VCs not interested

When intelligence gets cheap

Ben Heller, Microsoft

Ben Heller framed the AI moment through Jevons' Paradox: when the cost of intelligence drops, demand doesn't decrease; it goes up. The result isn't less work, but more ambition. Our ceilings have historically been set by the cost of human intelligence, and cheaper artificial intelligence expands what's possible rather than simply replacing what exists.

Ben’s practical advice for organizations was to bring shadow AI usage into the light through an orchestrated path within governance parameters. The approach is three steps: enable, govern, orchestrate. But the sequence matters. Shape your organization first before scaling adoption, rather than chasing tools and hoping governance catches up. Ben also used a killer Pokémon analogy about evolution.

The era of infinite language

Erkang Zheng, ariso.ai

Erkang Zheng argued for a mindset shift from AI as replacement to AI as leverage. Ask not what AI can do instead of us, but what it can help us accomplish that we couldn't before. Erkang pointed to a concrete example: an autonomous startup running with a founder-operator agent and coding agent that built a live business with over 4,000 customers at $300k ARR.

The broader shifts Erkang outlined reframe how organizations should think about AI: from tools to teammates, from more layers to more leaders where every leader becomes a player-coach, and from being busy to being human. The leverage AI provides isn't about doing the same work faster. It's about freeing people to focus on judgment, decision-making, creativity, and empathy.

Amazing, heartfelt thank yous

Todd Lewis, All Things Open

Todd’s wrap-up remarks and thank-yous to speakers could easily have been generic. Instead, for each afternoon keynote speaker he introduced, he shared a heartfelt, personal thank you about how their support had been meaningful to him and to the All Things Open/All Things AI ecosystem. As he expressed gratitude, Todd highlighted how each of them showed up as empathetic humans.

And that is probably the most important learning from the whole conference: The way each of us shows up as individual human beings is profound and important, no matter how technology advances, shifts, and evolves.

Cathy Colliver is Head of Marketing at Test Double, holds an M.B.A., and has expertise across strategy, brand, content, and marketing ops at startups, mid-size companies, and enterprise.

Christine McCallum-Ranalls is a Director of People at Test Double, and has experience in employee relations, policy development, and culture building.