Automated testing is a point of contention in the software industry. We debate whether or not it's required, and then we debate exactly what it should look like. I believe that automated testing is an essential part of modern software development.

Conveying exactly why has been a challenge for me in the past. As a less experienced developer, I focused a lot on the technical specifics and the perceived benefits rather than exactly why I held that belief. This article is another go at making my case, but by reframing my argument around the limitations of manual testing.

It's actually fairly short. It boils down to: manual testing has value, but it can't keep up with feature complexity.

Interested? Let's explore the relationship between manual testing and feature complexity, then we'll dive deeper into the consequences of it and talk about why automated testing helps with that dynamic without fully replacing manual testing.

Setting the stage

Let's imagine we're at a standard company producing software. Here are the assumptions I'm making:

- Software quality matters to us. We don't want new releases to contain big defects and there's a quality assurance process to help with that.

- We're not in hyper-growth. Hires are an exception rather than the norm and our teams have to make do with the people they do have.

- Changes can cascade. We have a complex system servicing our needs. That means code changes can have unexpected and wide-ranging impact across that system because new features are built on top of old ones. To avoid defects getting through the cracks, we'd rather test a larger set of features.

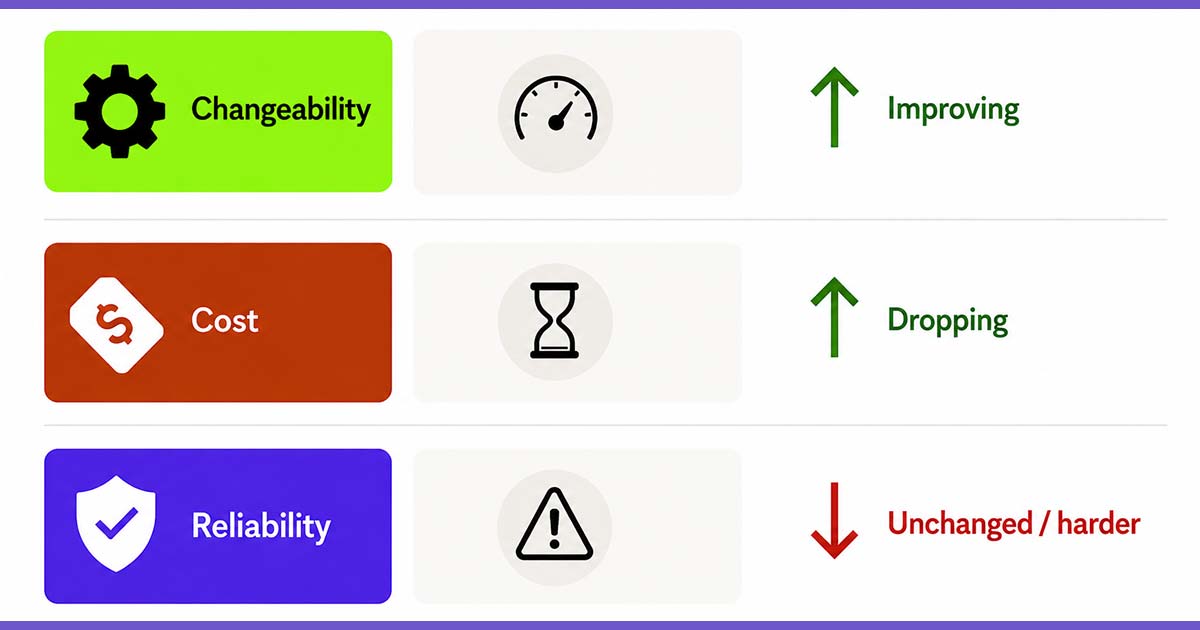

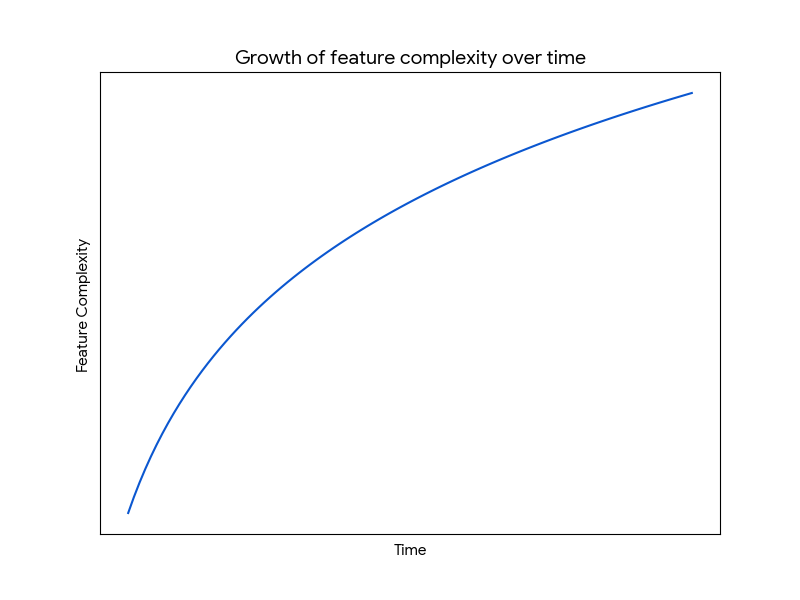

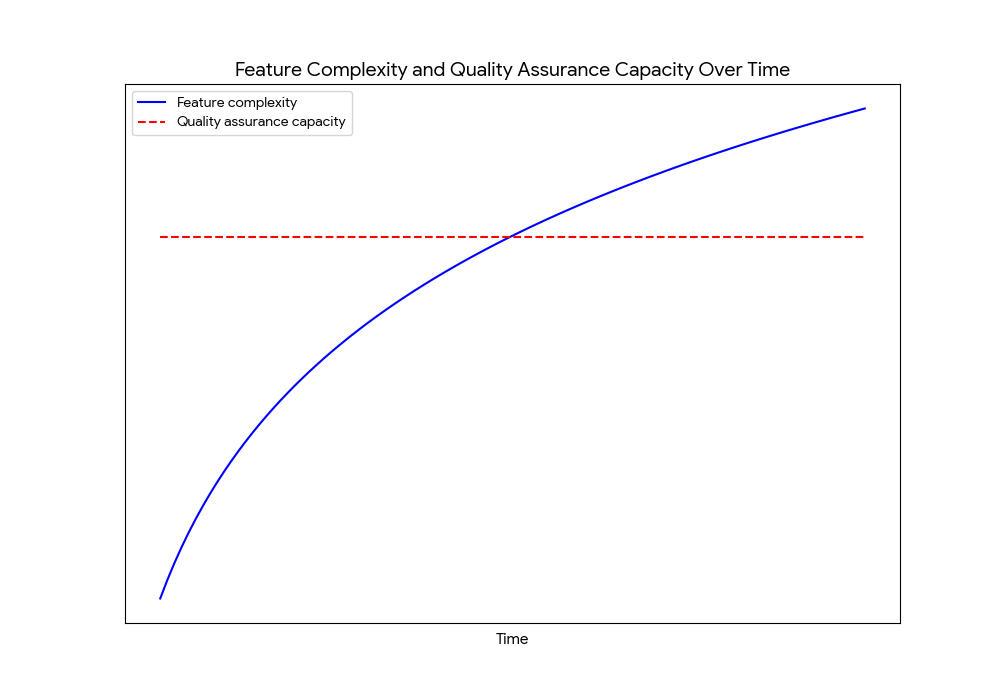

Now we're working on our product and developers are writing new features every few weeks. As time passes, there will be more and more features in the system. A lot of features will also depend on previous ones. All in all, the feature complexity of the software will keep growing over time.

What does our quality assurance capacity look like? It might be dedicated testers or maybe developers have a budget of time for quality assurance before releasing. Either way, we end up at the same place: the quality assurance capacity is fixed.

The testing burden increases over time

You've probably guessed where we're going. Let's combine these two charts and talk through the story depicted by the new chart.

For a while, quality assurance isn't even close to a concern. We can have our cake and eat it too by producing a lot of functionality and testing it thoroughly.

Eventually, we'll hit a balance between the complexity of the software and our capacity to test it. We won't even realize there's a downhill slope right around the corner because there aren't any direct consequences yet and the warning signs are easy to ignore. Quality won't appear to be slipping and bug counts won't really be up.

Complexity will eventually pile up. Features will start interacting in unforeseen ways. The effort required to properly test it all will hit a turning point because there are only so many hours of manual testing available. The gap between the complexity of our software and our ability to test it will have grown fairly large by the time we see quality dipping to a noticeable degree. The software will start feeling more and more like a Jenga tower as the team keeps stretching farther and farther beyond its natural quality assurance capacity.

The worst part? These quality concerns will only be the tip of the iceberg.

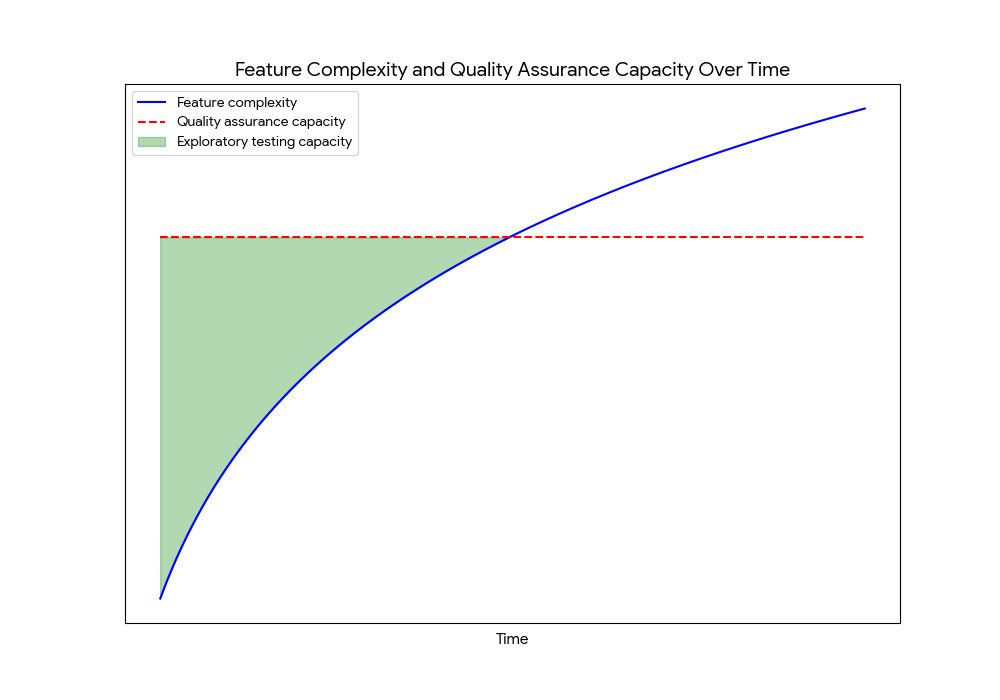

Why? We'll be so busy looking for old bugs that we won't have time to look for new ones. There won't be any time left for exploratory testing.

The loss of exploratory testing

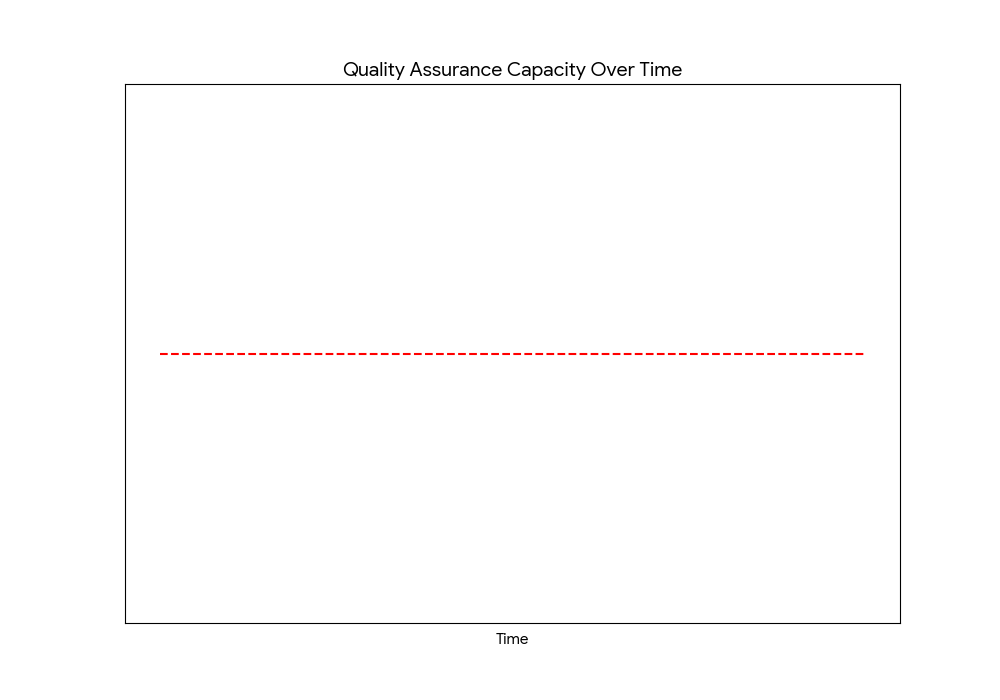

There's a shift that happens once we're stretched thin when testing. We try to optimize our time and go through test cases at blazing speed so we can test everything in time for the next release. That means we limit ourselves to regression testing: looking for previously seen or anticipated bugs.

Another angle to quality assurance that we haven't covered yet is exploratory testing: looking for new and unexpected bugs. It requires large blocks of uninterrupted time because finding these can only be done through unscripted exploration and building a deeper understanding of the software. Of course, large blocks of uninterrupted time to find potential bugs we haven't seen before is a hard pill to swallow if we're pressed for time just running all the regression tests. Exploratory testing will seem like a waste of time at that point.

Even if we manage to dedicate time for it, remember that the number of features in the system is still constantly growing. How long will we be able to preserve that time when there's an ever-increasing number of test cases that aren't being covered?

Let's try to visualize our capacity to do exploratory testing in our graph:

The influence of AI in manual vs automated testing

Is all of the above still relevant in the age of AI we're currently living through? Absolutely, because one of the biggest benefits attributed to AI is making developers more productive at writing code.

Let's say our developers start writing twice as many features. The direct consequence here is that we'll have to test twice as many things. We'll hit the limits of our testing capacity twice as fast and the software will keep getting more and more complex way past that capacity at a much higher rate.
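To make that arithmetic concrete, here's a minimal sketch of the dynamic. Every number and name in it is made up for illustration. It assumes each feature adds a fixed amount of manual regression testing per release and that the testing budget never changes, which is a simplification of the cascading changes described earlier.

```python
def last_release_within_capacity(capacity_hours, hours_per_feature, features_per_release):
    """Return the last release where manually retesting every feature still fits the budget."""
    # Burden at release n = n * features_per_release * hours_per_feature (illustrative model).
    return capacity_hours // (features_per_release * hours_per_feature)


def untested_hours(release, capacity_hours, hours_per_feature, features_per_release):
    """Return how many hours of regression testing no longer fit at a given release."""
    burden = release * features_per_release * hours_per_feature
    return max(0, burden - capacity_hours)


# Hypothetical team: 80 hours of manual testing per release, 1 hour per feature.
before_ai = last_release_within_capacity(80, 1, features_per_release=4)
with_ai = last_release_within_capacity(80, 1, features_per_release=8)
print(before_ai, with_ai)

# The gap at release 30 under both feature rates.
print(untested_hours(30, 80, 1, 4), untested_hours(30, 80, 1, 8))
```

With these made-up numbers, doubling the feature rate halves the number of releases that fit within the budget, and the untested gap at a later release grows by more than double.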

Essentially, AI widens the gap between what manual testing can cover and how complex our features have become.

Adding automated testing

Now that we've talked through the limitations of manual testing compared to the expanding complexity of our software system, why would automated testing help?

While the time for manual testing isn't a thing we can steadily increase, automated testing capacity is. Because it's software, an automated testing suite can grow and expand in much the same way that the features we build will stack up over time.

Because it's especially good at repeatedly running the same test cases, automated testing can take on much of the burden of regression testing. That's an amazing thing because it frees up time for humans to do the things an automated testing suite simply can't do, like exploratory testing.

Keeping the right type of manual testing

Automated testing is always going to be backwards-facing because it'll be running through test cases for issues we've predicted or have seen before. That's exactly where it excels: regression testing.
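As an illustration, here's what a small automated regression test might look like using Python's built-in `unittest` module. The `apply_discount` function and the bug it guards against are hypothetical, invented only to show the shape of a test that pins down an issue we've seen before.

```python
import unittest


def apply_discount(price_cents, percent):
    """Hypothetical production code: return the price after a percentage discount."""
    # Clamp the discount so a bad input can never produce a negative price.
    percent = max(0, min(percent, 100))
    return price_cents - (price_cents * percent) // 100


class ApplyDiscountRegressionTest(unittest.TestCase):
    def test_regular_discount(self):
        # An anticipated case: 25% off a 20.00 item.
        self.assertEqual(apply_discount(2000, 25), 1500)

    def test_discount_over_100_percent_does_not_go_negative(self):
        # Pins a (hypothetical) bug seen before: 150% off used to give a negative price.
        self.assertEqual(apply_discount(2000, 150), 0)
```

Saved in a test file, this could be run on every change with `python -m unittest`. Both cases only check behavior we already thought of, which is exactly the backwards-facing nature described above.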

Does that mean we should stop manual testing entirely? Not exactly. Teams that only run automated tests without occasionally exercising their systems by hand will miss issues. We still need exploratory testing to find the truly new and unexpected bugs. It's the forward-looking approach where humans still excel.

You might think this undermines my whole argument for automated testing, but I'd argue that it supplements it: we're taking advantage of the best of both worlds. Automated testing handles the time-intensive, repetitive test cases while humans only spend a fraction of the time doing the valuable work of looking for unexpected interactions and issues caused by new features.

Getting the most out of automated testing

While we won't dive deeper into the specifics of automated testing, here are a few things to think about to get the most out of it.

Automated testing is a wholly different skill than manual testing or writing production code. While some competencies will transfer, it requires a different mindset and it takes time to build competency in it. Individuals and teams adopting automated testing will need time to properly engage with that new skill before they get the most benefit out of it.

Adopt automated testing earlier rather than later. The worst case we're looking to avoid here is adding automated testing when we're in the rightmost part of our graph because that's when we'll invest the most effort for the least benefit. It's much harder to retrofit software to be testable down the road. Plus, it takes time for an automated test suite to grow and mature enough to reap larger benefits.

Figure out the right automated testing setup for you. The internet is littered with best practices and test types you "need" to adopt. Take the time to reflect and experiment to figure out what will work best for your own environment and context.

Automated testing is a journey, not a destination. Especially with AI nowadays, it might be tempting to use its magic wand to generate all the tests at once for you and call it a day, but that would be missing the point. You'll get the most value out of automated testing once your suite has been refined over time and you've built solid human expertise in it. Even then, there will still be a road of further improvements ahead.

Doing automated testing well is hard

I hope that this provided you with a helpful mental framework when advocating for automated testing. Manual testing can find issues automated tests never would, but it simply doesn't scale on its own. Done well, automated testing will support your capacity to consistently produce quality software.

Once you've got people onboard, the next challenge will be doing automated testing well. Just like everything in software development, you'll need to think deeply about your context and your needs when investing in it to really get the most out of it. There's no single perfect way of doing it because it's a deep and nuanced technical subject. It is, however, something we have a lot of insights about.

Want to talk through what automated testing might look like for your context? We offer free pairing sessions where we could brainstorm through it together!

Gabriel Côté-Carrier is a senior software consultant at Test Double, and has experience in full-stack development, leading teams and teaching others.